Location: US


Challenges Entered

Multi-Agent RL for Trains

Latest submissions

Measure sample efficiency and generalization in reinforcement learning using procedurally generated environments


Multi-Agent Reinforcement Learning on Trains

Latest submissions

| Status | Submission ID |
|---|---|
| failed | 104806 |
| graded | 104805 |
| graded | 103370 |

Multi-agent RL in game environment. Train your Derklings, creatures with a neural network brain, to fight for you!


Multi Agent Reinforcement Learning on Trains.


Multi-Agent Reinforcement Learning on Trains


| Participant | Rating |
|---|---|
| shivam.agarwal | 0 |

- An_Old_Driver, Flatland Challenge
- An_Old_Driver, Flatland
- An_Old_Driver, Flatland 3

Flatland

Flatland Challenge

Is this an error in the local evaluation envs?

Over 6 years agoHello,

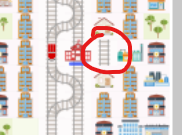

When I was doing the local evaluation, I found some weird railways on the map. For example,

When I tried to use env.rail to obtain the railway information, this small railway (not connected to anything) also appeared in the returned information.

Is this a mistake, or am I supposed to handle this kind of unconnected railway?
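If such isolated pieces do have to be handled, one option is to group the rail cells into connected components and flag any component that contains no agent start or target. The helpers below (`rail_components`, `isolated_components`) are hypothetical and not part of Flatland. The sketch assumes the map is available as a 2D array in which a non-zero cell contains track (we believe `env.rail.grid` has this form, but treat that as an assumption), and it treats two rail cells as connected whenever they are 4-neighbours. That only approximates real connectivity, since Flatland cells only connect where their transitions allow it.

```python
from collections import deque


def rail_components(grid):
    """Group non-zero (rail) cells of a 2D grid into 4-connected components.

    Assumption: adjacency of non-zero cells stands in for real track
    connectivity; actual transitions between cells are not checked.
    """
    rows = len(grid)
    cols = len(grid[0]) if rows > 0 else 0
    seen = set()
    components = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] == 0 or (r, c) in seen:
                continue
            # Breadth-first search from an unvisited rail cell.
            component = []
            queue = deque([(r, c)])
            seen.add((r, c))
            while queue:
                cr, cc = queue.popleft()
                component.append((cr, cc))
                for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                    nr, nc = cr + dr, cc + dc
                    if (0 <= nr < rows and 0 <= nc < cols
                            and grid[nr][nc] != 0 and (nr, nc) not in seen):
                        seen.add((nr, nc))
                        queue.append((nr, nc))
            components.append(component)
    return components


def isolated_components(grid, agent_cells):
    """Return the rail components that contain none of the given cells."""
    agent_cells = set(agent_cells)
    return [comp for comp in rail_components(grid)
            if not agent_cells.intersection(comp)]


# Toy 4x5 map: a track from (0, 0) to (2, 2) plus a stray piece at (2, 4)-(3, 4).
grid = [
    [1, 1, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 1],
    [0, 0, 0, 0, 1],
]
print(isolated_components(grid, agent_cells=[(0, 0), (2, 2)]))  # [[(2, 4), (3, 4)]]
```

Here the stray piece is reported because no agent starts or ends on it; an agent's planner could then simply ignore those cells.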

Best wishes.

Can we still submit for round 1?

Over 6 years ago

Hi @mlerik,

I don’t have the exact answer yet. I will first try the updated Flatland library with the local evaluation and then try a round 2 submission. I will let you know once I have the results.

Many thanks.

Can we still submit for round 1?

Over 6 years ago

Hello @mlerik,

We are using Flatland v2.0.0.

When running the local evaluation, the program threw an exception saying that the “remote and local reward are diverging”. I have checked our code and confirmed that it does not change anything in the local environment, and it solves all the local copies correctly. I couldn’t figure out what caused the exception.

Then I read through the library code, including “service.py” and “client.py” (Flatland v2.0.0). It seems that the agents in the local copy and the remote copy have different start and target locations.

The reason is that env.reset() is called without any arguments (i.e. with the default values regen_rail=True and replace_agents=True, according to your “rail_env.py”) whenever the remote environment passes a local copy to our code.

To check whether this reset() call changes the agents’ start and target locations, I wrote a short experiment:

1. Create a simple railway environment with complex_rail_generator.
2. Initialize a rendering tool to display the rail environment.
3. In a while loop:
   3.1. Call env.reset().
   3.2. Display the map with the rendering tool.

It turns out that the agents’ start and target locations change every time env.reset() is called.

So, if I am correct, the local copy and the remote copy actually have different agent start and target locations. Our code reads this information from the local copy, so it produces actions that are correct for the local copy but not for the remote copy.

Also, I noticed that in your latest version of the Flatland library (2.1.3), the env.reset() call in service.py and client.py (which in Flatland 2.0.0 used the defaults) has been changed to:

```python
self.env.reset(
    regen_rail=False,
    replace_agents=False,
    activate_agents=False,
    random_seed=RANDOM_SEED
)
```

So I am wondering whether this was a bug in Flatland 2.0.0 that was fixed in Flatland 2.1.3.
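The suspected mechanism can be illustrated with a toy model. `ToyRailEnv`, `local_matches_remote` and the value of `RANDOM_SEED` below are hypothetical: they are not Flatland’s `RailEnv` or its service code. The toy only models agent placement and merely mirrors the `reset()` argument names discussed in this post. It builds a “remote” and a “local” copy from the same seed, resets only the local one, and compares the agents’ (start, target) pairs.

```python
import random


class ToyRailEnv:
    """Hypothetical stand-in for a rail environment (NOT Flatland's RailEnv).

    Only agent placement is modelled: the map is a fixed list of cells and
    each agent gets a distinct (start, target) pair sampled from it.
    """

    def __init__(self, cells, n_agents, seed):
        self.cells = list(cells)
        self.n_agents = n_agents
        self.rng = random.Random(seed)
        self.agents = self._sample_agents()

    def _sample_agents(self):
        picks = self.rng.sample(self.cells, 2 * self.n_agents)
        return [(picks[2 * i], picks[2 * i + 1]) for i in range(self.n_agents)]

    def reset(self, regen_rail=True, replace_agents=True,
              activate_agents=False, random_seed=None):
        # regen_rail and activate_agents are accepted but ignored here,
        # because the toy map never changes and agents have no other state.
        if random_seed is not None:
            self.rng = random.Random(random_seed)
        if replace_agents:
            self.agents = self._sample_agents()
        return self.agents


def local_matches_remote(reset_kwargs, seed=0):
    """Build two identical copies, reset only the local one, compare agents."""
    cells = [(r, c) for r in range(5) for c in range(5)]
    remote = ToyRailEnv(cells, n_agents=3, seed=seed)  # what the grader steps
    local = ToyRailEnv(cells, n_agents=3, seed=seed)   # what our code sees
    local.reset(**reset_kwargs)
    return local.agents == remote.agents


RANDOM_SEED = 1001  # hypothetical value, for illustration only
print(local_matches_remote({}))  # v2.0.0-style call: defaults resample agents
print(local_matches_remote(dict(regen_rail=False, replace_agents=False,
                                activate_agents=False,
                                random_seed=RANDOM_SEED)))
```

With the argument-less call, the default `replace_agents=True` resamples the agents, so the two copies almost surely disagree; with the 2.1.3-style arguments the agents are left in place and the copies match.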

I made the above assumption only from reading your code, so I might be wrong. If you don’t mind, could you tell me whether I am correct? I couldn’t figure out what caused the reward-diverging exception when our code finds the correct paths and actions for all the local copies of the railway environments.

This is the main reason I still want to submit for round 1. Another reason is that we want to test our environment setup for repo2docker and check whether it works.

Many thanks.

Flatland challenge website "My Team" button leading to wrong link

Almost 6 years ago

Hello,

I participated in the 2019 challenge, and I am using the same team name for the 2020 challenge, but with different team members this time.

The “My Team” button on the website takes me to the old 2019 Flatland challenge team instead of the new 2020 challenge team.

When I click it, it goes to “https://www.aicrowd.com/challenges/flatland-challenge/teams/my_team_name” instead of “https://www.aicrowd.com/challenges/neurips-2020-flatland-challenge/teams/my_team_name”

Would this be a problem for participating in this challenge? I cannot delete the old team.

Best wishes.