s-abramov

Location

IL




Challenges Entered

Understand semantic segmentation and monocular depth estimation from downward-facing drone images

Latest submissions

A benchmark for image-based food recognition

Latest submissions

Airborne Object Tracking Challenge

Latest submissions

| Status | Submission ID |
|---|---|
| failed | 153695 |
| failed | 153694 |
| failed | 153693 |

Estimate depth in aerial images from monocular downward-facing drone

Latest submissions


s-abramov has not joined any teams yet...

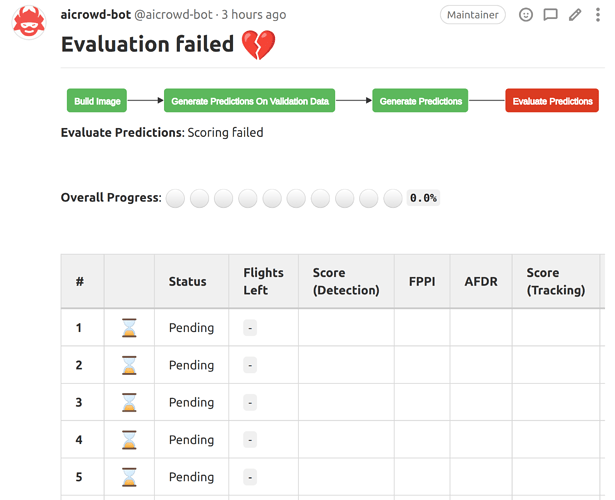

Airborne Object Tracking Challenge

Evaluate predictions: Scoring failed

Over 4 years ago

Hi,

I got an error in the evaluate predictions stage.

All classes are converted to JSON-serializable types.

How can I troubleshoot this issue?
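One way to narrow this down locally, before resubmitting, is to round-trip a prediction through `json.dumps` and see whether it fails. The sketch below is only an illustration: the helper `to_jsonable` and the record fields (`track_id`, `bbox`, `score`) are hypothetical and are not taken from the challenge's starter kit. It assumes that non-native values such as NumPy scalars or arrays expose a `tolist()` method.

```python
import json


def to_jsonable(obj):
    """Recursively convert a value into plain JSON-compatible Python types."""
    if isinstance(obj, dict):
        # JSON object keys must be strings.
        return {str(key): to_jsonable(value) for key, value in obj.items()}
    if isinstance(obj, (list, tuple, set)):
        return [to_jsonable(value) for value in obj]
    if obj is None or isinstance(obj, (str, int, float, bool)):
        return obj
    # Assumption: array-like and scalar wrappers (e.g. NumPy) provide tolist().
    if hasattr(obj, "tolist"):
        return to_jsonable(obj.tolist())
    raise TypeError(f"Cannot serialize value of type {type(obj).__name__}")


# Hypothetical prediction record; the field names are illustrative only.
prediction = {"track_id": 7, "bbox": (10.0, 20.0, 30.0, 40.0), "score": 0.91}

# allow_nan=False makes json.dumps raise ValueError on NaN or Infinity,
# which are not valid JSON and can break a strict parser downstream.
payload = json.dumps(to_jsonable(prediction), allow_nan=False)
print(payload)
```

If this succeeds locally for every record, the failure is more likely in the content of the predictions (missing fields, unexpected value ranges) than in serialization itself, though that is a guess without the evaluator logs.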

CUDA OOM in generate predictions stage

Over 4 years ago

I got a CUDA OOM error in the generate predictions stage:

RuntimeError: CUDA out of memory. Tried to allocate 146.00 MiB (GPU 0; 15.78 GiB total capacity; 7.99 GiB already allocated; 146.75 MiB free; 14.43 GiB reserved in total by PyTorch)

But my model fits in 3500 MB of video RAM on my desktop, so it should certainly fit in the V100's memory.

Could you help with this issue, please?
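The numbers in the error message already give a hint. A small sketch that parses them and compares what PyTorch has reserved with what is actually allocated to live tensors is shown here; the helper name `parse_oom` is hypothetical, and the regular expressions assume the message format quoted above.

```python
import re

# Conversion factors to GiB for the units that appear in the message.
TO_GIB = {"MiB": 1 / 1024, "GiB": 1.0}

PATTERNS = {
    "requested": r"Tried to allocate ([\d.]+) (MiB|GiB)",
    "total": r"([\d.]+) (MiB|GiB) total capacity",
    "allocated": r"([\d.]+) (MiB|GiB) already allocated",
    "free": r"([\d.]+) (MiB|GiB) free",
    "reserved": r"([\d.]+) (MiB|GiB) reserved",
}


def parse_oom(message):
    """Extract the memory figures (in GiB) from a CUDA OOM message."""
    stats = {}
    for name, pattern in PATTERNS.items():
        match = re.search(pattern, message)
        if match:
            stats[name] = float(match.group(1)) * TO_GIB[match.group(2)]
    return stats


message = (
    "RuntimeError: CUDA out of memory. Tried to allocate 146.00 MiB "
    "(GPU 0; 15.78 GiB total capacity; 7.99 GiB already allocated; "
    "146.75 MiB free; 14.43 GiB reserved in total by PyTorch)"
)

stats = parse_oom(message)
# Memory held by PyTorch's caching allocator but not used by live tensors.
cached_gap = stats["reserved"] - stats["allocated"]
print(f"reserved but not allocated: {cached_gap:.2f} GiB")
```

About 8 GiB is allocated and roughly 6.4 GiB more is reserved but unused, so the process is using far more than the 3500 MB seen on the desktop. Possible causes, none of them confirmed by the message alone, include larger input frames or batches on the evaluation server, inference running without `torch.no_grad()`, or tensors from earlier frames being kept alive.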

s-abramov has not provided any information yet.

Evaluate predictions: Scoring failed

Over 4 years ago

Hi, @shivam.

Thank you very much!

I've replied (and answered the additional questions :)) on the issue page.