Seismic Facies Identification Challenge

[Explainer]: Seismic Facies Identification Starter Pack

keras-image-segmentation package to produce a model, train it, and generate the final predictions.

Just a simple notebook

Hi there! I am a recently graduated geophysicist from Argentina. I got into Data Science and Machine Learning just a few months ago, so I’m certainly an inexperienced little tiny deep learning practitioner as you may well guess.

Here are some questions you may ask yourselves…

What is this challenge?

This challenge tries to overcome something that has troubled the Oil&Gas industry for so many years: how can I interpret such huge amount of information in so little time?

Seismic facies are one of the abstractions that are regularly used to compress the amount of information. We define that certain patterns in the seismic image are defined by the response of a certain layer, this layer, when grouped with several other layers with the same characteristics, tend to generate a response that stands out from other patterns in the seismic image.

This common response helps grouping these layers that behave similarly, and thus helping reducing the amount of information on image.

But how do we group all these patterns together?! Well, Deep Learning to the rescue!

Let’s dive in:

What did you do?

The last few hours I’ve tried to put together a simple notebook that goes from showing some simple seismic attributes, to implementing a deep learning model. I hope that if you are starting just like me you’ll find it useful. And if you are an already experienced machine learning practitioner, you might find some insights on how to improve your results!

A couple of examples from the notebook:

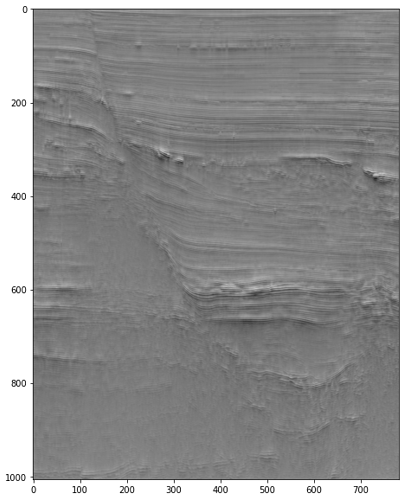

TECVA seismic attribute

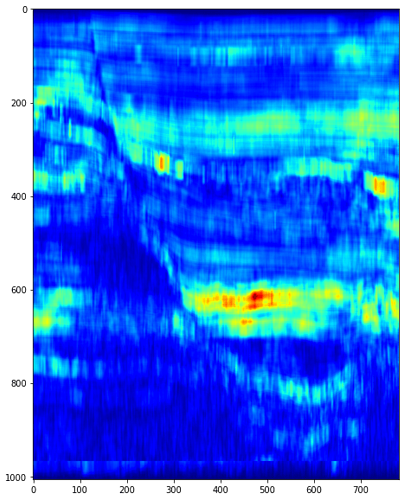

RMS seismic attribute

How did you do it?

I started by using my seismic attributes knowledge, then I tried to think of a way of implementing different kinds of information, and finally, I used the keras-image-segmentation package that you can find here: https://github.com/divamgupta/image-segmentation-keras to produce a model, train it, and generate the final predictions.

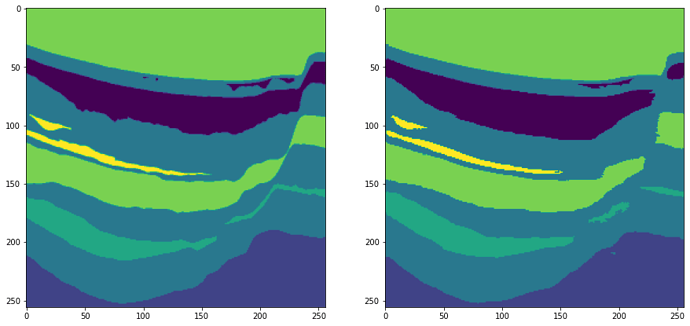

Sample image of the results of training the net (label set / trained set):

Well, but I could have done that on my own!..

Well, of course you could! But beware that I also try to give an insight on some facts over using this seismic dataset and how you may improve your results by taking this into account. Maybe it will help you!

I don’t really know about these seismic facies and stuff…

Well, maybe you just like to watch at some random guys’ notebook and you’ll probably like those nice images!

LINK TO THE NOTEBOOK: https://colab.research.google.com/drive/1935VS3tMKoJ1FbgR1AOkoAC2z0IO9HWr?usp=sharing

You can also get in touch via Linkedin (link to the post with this notebook)!:

Hope you guys find it useful, funny and maybe consider giving it a thumbs up!

See you around!

Just a simple notebook¶

Hi there! I am a recently graduated geophysicist from Argentina. I got into Data Science and Machine Learning just a few months ago, so I'm certainly an inexperienced little tiny deep learning practitioner as you may well guess. Here are some questions you may ask yourselves...

What did you do?¶

The last few hours I've tried to put together a simple notebook that goes from showing some simple seismic attributes, to implementing a deep learning model.

How did you do it?¶

I started by using my seismic attributes knowledge, then I tried to think of a way of implementing different kinds of information, and finally, I used the keras-image-segmentation package that you can find here: https://github.com/divamgupta/image-segmentation-keras to produce a model, train it, and test it.

Well, but I could have done that on my own!...¶

Well, of course you could! But beware that I also try to give an insight on some facts over using this seismic dataset and how you may improve your results by taking this into account. Maybe it will help you!

I don't really know about these seismic facies and stuff...¶

Well, maybe you just like to watch at some random guys' notebook and you'll probably like those nice images!

Hope you find it useful, funny and maybe consider giving it a thumbs up on the discourse!

Loading packages¶

This notebook uses keras_segmentation package to take advantage of other pre-trained models used in image segmentation.

It also uses:

- NumPy (duh..)

- matplotlib (duh..x2)

- cv2 (dux..x3)

- tensorflow (kind of duh..)

- scipy (wait... why?)

# Installing image_segmentation_keras

!pip install git+https://github.com/santiactis/image-segmentation-keras

# For data preprocessing & manipulation

import numpy as np

# FOr data visualisations & image processing

import matplotlib.pyplot as plt

import cv2

import scipy

# utilities

from tqdm.notebook import tqdm

import datetime

# For Deep learning

import tensorflow as tf

from tensorflow import keras

from keras_segmentation.models.unet import vgg_unet, resnet50_unet

from keras_segmentation.models.model_utils import get_segmentation_model

Loading the data¶

We download the datasets for the competition using !wget and their corresponding URLs

# Downloading training data

!wget https://datasets.aicrowd.com/default/aicrowd-public-datasets/seamai-facies-challenge/v0.1/public/data_train.npz

# Downloading training data

!wget https://datasets.aicrowd.com/default/aicrowd-public-datasets/seamai-facies-challenge/v0.1/public/labels_train.npz

# Downloading testing data

!wget https://datasets.aicrowd.com/default/aicrowd-public-datasets/seamai-facies-challenge/v0.1/public/data_test_1.npz

Don't look in here.......

#@title

import matplotlib.colors as mcolors

# Helpful functions

def hex_to_rgb(value):

'''

Converts hex to rgb colours

value: string of 6 characters representing a hex colour.

Returns: list length 3 of RGB values'''

value = value.strip("#") # removes hash symbol if present

lv = len(value)

return tuple(int(value[i:i + lv // 3], 16) for i in range(0, lv, lv // 3))

def rgb_to_dec(value):

'''

Converts rgb to decimal colours (i.e. divides each value by 256)

value: list (length 3) of RGB values

Returns: list (length 3) of decimal values'''

return [v/256 for v in value]

def get_continuous_cmap(hex_list, float_list=None):

''' creates and returns a color map that can be used in heat map figures.

If float_list is not provided, colour map graduates linearly between each color in hex_list.

If float_list is provided, each color in hex_list is mapped to the respective location in float_list.

Parameters

----------

hex_list: list of hex code strings

float_list: list of floats between 0 and 1, same length as hex_list. Must start with 0 and end with 1.

Returns

----------

colour map'''

rgb_list = [rgb_to_dec(hex_to_rgb(i)) for i in hex_list]

if float_list:

pass

else:

float_list = list(np.linspace(0,1,len(rgb_list)))

cdict = dict()

for num, col in enumerate(['red', 'green', 'blue']):

col_list = [[float_list[i], rgb_list[i][num], rgb_list[i][num]] for i in range(len(float_list))]

cdict[col] = col_list

cmp = mcolors.LinearSegmentedColormap('my_cmp', segmentdata=cdict, N=256)

return cmp

We've got to talk about Seismic (Attributes)¶

We are going to make a gentle and very visual introduction to seismic attributes. These are widely used in the Oil&Gas industry to aid Geophysicists and Geologist to find new prospects fast and easy. Of course that afterwards, each potential prospect is further analyzed to confirm if it is just an anomaly in the seismic image or in fact, a potential prospect.

Visualizing the seismic slice over Y=380¶

We are going to visualize one of our seismic sections over which we are going to calculate differente seismic attributes.

# Taking a seismic slice for calculating seismic attributes

data_seis = np.load('/content/data_train.npz',

allow_pickle=True, mmap_mode = 'r')['data'][:,:,380]

# Setting figure size

plt.rcParams["figure.figsize"] = (20, 10)

# We plot the slice

plt.imshow(data_seis, cmap='binary')

Instantaneous Attributes¶

They are defined by taking the analytic trace in consideration. This trace is obtained by taking the Hilbert transform over the real trace, and thus, obtaining the complex part of the analytic trace. By doing this, the seismic trace is composed by a real part (the real trace) and a complex part (its Hilbert transform.

This trace now allows us to calculate the so called 'Instantaneous Attributes'. These seismic attributes were of the first ever used when looking for DHI (Direct Hydrocarbon Indicators), generally by looking at the Envelope searching for amplitude anomalies.

The analytic trace $u(t)$ is defined as: $u(t) = x(t) + i y(t)$ , where $x(t)$ is the real trace and $y(t)$ is the Hilbert transform of $x(t)$ ( $H[x(t)]$ )

# We calculate the Hilbert transform for every trace in the slice to obtain the analytic traces

def calc_hil(data):

H = []

for i in range(data.shape[1]):

H.append(np.imag(scipy.signal.hilbert(data[:,i])))

H = np.asarray(H)

H = np.swapaxes(H, 0,1)

return H

H = calc_hil(data_seis)

# We plot the slice

plt.imshow(H, cmap='binary')

Ok, so at first glance this might seem the same thing... So let's go a little further

Envelope¶

This seismic attribute contributes information about the strength of a reflection, therefore giving information about "how different" are two geologic formations on the subsurface. The envelope is defined as: $E(t)=\sqrt{x^2 + y^2}$

# We calculate the Envelope

def calc_env(data):

env = data.copy()*0.0

for i in range(data.shape[1]):

for j in range(data.shape[0]):

env[j,i] = np.sqrt((data[j,i])**2 + (H[j,i])**2)

return env

env = calc_env(data_seis)

# We plot the slice

plt.imshow(env, cmap='jet')

Well... This looks a little bit more promising now, doesn't it? I can already assure you that some geophysicist or geologist are looking into those bright anomalies next to that main fault drooling over the potential fields... This could be enhanced visually by changing the middle values of the palette. Let's keep going...

Instantaneous Phase¶

This one is kind of a trickier one. It generally helps to define the edges of the reflectors and also helps on defining discontinuities. Its meaning it's a little difficult to define, some say that it has to do with the wave front, thus, the values that have a certain continuity correspond to the same wave front, and since we are looking at reflections, well, we could be seeing the same reflector if we follow a certain pattern. It is defined as: $ \theta = atan\left (\frac{x(t)}{y(t)} \right)$ . This generates some troubles over certain values, therefore it's useful to take the Cosine of that function, which is what I will do here.

# We calculate the Cosine of the Instantaneous Phase

def cos_ph(data):

ph = data.copy()*0.0

for i in range(data.shape[1]):

for j in range(data.shape[0]):

ph[j,i] = np.cos((data[j,i])/(H[j,i]))

return ph

ph = cos_ph(data_seis)

# We plot the slice

hex_list = ['#000000', '#ffffff', '#000000']

plt.imshow(ph, cmap=get_continuous_cmap(hex_list))

It seems difficult to see much, but if you look close enough you can see that we can define the main fault plane and differentiate the reflector packages. Maybe another palette would help?

Instantaneous Frequency¶

Well, if the last one was tricky, this one will rock your world. This seismic attribute is said to help with finding fracture zones when encountering low frequency anomalies, it is also said to help with defining tuning zones, and much many other things that are very difficult to prove (even though is one of the things I tried in my thesis). It is defined as: $\omega = \frac{d\theta}{dt}$ This now imposes a challenge because we might find anomalies that go beyond something you could explain with physics (negative frequencies), but we will not get into that, we're here for the fun!

# We calculate the Instantaneous Frequency

def calc_ifreq(data):

ifreq = data.copy()*0.0

for i in range(data.shape[1]):

for j in range(data.shape[0]):

if j==0:

ifreq[j,i] = 0

elif j==data.shape[0]:

ifreq[j,i] = 0

else:

ifreq[j,i] = (ph[j,i])-(ph[j-1,i])

return ifreq

ifreq = calc_ifreq(data_seis)

# We plot the slice

plt.imshow(ifreq, cmap='jet')

You could say that these last ones relatively similar! But wait, this is not over yet...

# We calculate the RMS Instantaneous Frequency

def calc_ifreq_rms(data, window):

ifreq_rms = data.copy()*0.0

for i in range(ifreq.shape[1]):

for j in range(data.shape[0]):

if j<window:

ifreq_rms[j,i] = (np.sum(np.sqrt((ifreq[0:j,i])**2)))/window

elif j>data.shape[0]-window:

ifreq_rms[j,i] = (np.sum(np.sqrt((ifreq[j:data.shape[0],i])**2)))/window

else:

ifreq_rms[j,i] = (np.sum(np.sqrt((ifreq[j-window:j,i])**2)))/window

return ifreq_rms

ifreq_rms = calc_ifreq_rms(data_seis, 5)

# We plot the slice

plt.imshow(ifreq_rms, cmap='jet')

That's more like it! Using a window and calculating the RMS over that window we get image, where we can see a lot of information that could be useful for training our net. For example, the main fault zone caan be defined, and we can even try to follow the behavior of certain reflectors packages over different parts of the image. I encourage you to play with the window size!

RMS Amplitude¶

Similarly to the Envelope, this seismic attribute only depends on the amplitude, and is obtained by calculating the sum over a certain window. We can define it as: $ A_{rms} = \sqrt{\frac {\sum_{i}^{N} A_{i}}{N}}$. This seismic attribute is widely used in the search for DHIs by observing at its anomalies. Its usefulness depends highly on the right choice of the window size.

def Amp_rms(data, window):

A_rms = data.copy()*0.0

for i in range(data.shape[1]):

for j in range(data.shape[0]):

if j<window:

A_rms[j,i] = (np.sum(np.sqrt((data[0:j,i])**2)))/window

elif j>data.shape[0]-window:

A_rms[j,i] = (np.sum(np.sqrt((data[j:data.shape[0],i])**2)))/window

else:

A_rms[j,i] = (np.sum(np.sqrt((data[j-window:j,i])**2)))/window

return A_rms

A_rms = Amp_rms(data_seis, 40)

# We plot the slice

plt.imshow(A_rms, cmap='jet')

I bet you can see why this seismic attribute was and still is widely used...

TECVA¶

While working on my thesis I came across a seismic attribute that enhances the reflector characteristics. Introduced by Bulhoes, it helps defining the characteristics of the reflectors by enhancing their geometry visually. I will not get into the math, but it's relatively straight forward!

def TECVA(data):

A_rms = Amp_rms(data,1)

TECVA = []

for i in range(A_rms.shape[1]):

TECVA.append(np.imag(scipy.signal.hilbert(A_rms[:,i])))

TECVA = np.asarray(TECVA)

TECVA = np.swapaxes(TECVA, 0,1)

return TECVA

tecva = TECVA(data_seis)

# We plot the slice

plt.imshow(tecva, cmap='binary')

I bet you liked that one, didn't you? Now, wouldn't it be cool if we could get some of these together?

fig, ax = plt.subplots()

ax.imshow(tecva, cmap='binary')

ax.imshow(A_rms, cmap='jet', alpha=0.075)

ax.imshow(ifreq_rms, cmap='jet',alpha=0.1)

Probably you are already getting tired of seismic attributes, so let's go into the Deep Learning stuff. Before that, I won't go without saying that these seismic attributes might help you in finding certain patterns that your already-better-than-mine can learn and take advantage of.

My implementation¶

I haven't ever worked on a project that uses deep learning, let alone in image processing, so the results on this notebooks are a clear example on how you can develop this knowledge from the bare ground. Let's dive into the datasets!

# We're first going to delete all those beautiful seismic attributes

del tecva, A_rms, ifreq_rms, ifreq, ph, env, H, data_seis

What does de data say?¶

*cue music from what does the fox say * 🦊

# We read our data into variables that we can inspect

seis_data = np.load('/content/data_train.npz',

allow_pickle=True, mmap_mode = 'r')

# We read our labels

labels = np.load("/content/labels_train.npz",

allow_pickle=True, mmap_mode = 'r')

# Inside the elements we pick what we are interesed in

X = seis_data['data']

Y = labels['labels']

# We print the shapes

print('X shape is: {}'.format(X.shape), 'and Y shape is: {}'.format(Y.shape))

If you remember from the Overview section, we have a seismic cube that is 1006 in z, by 782 in x and 590 in y, so this seems about right. Also, as we where expecting, the labels cube have the same dimensions. Let's see how they look like inside.

# We are going to take some random slices from z, x, y to see how they look like

imgx = X[:,380,:]

labx = Y[:,380,:]

imgy = X[:,:,380]

laby = Y[:,:,380]

imgz = X[500,:,:]

labz = Y[500,:,:]

# We plot them side by side

plt.rcParams["figure.figsize"] = (30, 20)

f, axarr = plt.subplots(3,2)

f.subplots_adjust(hspace=0.5, wspace=0)

axarr[0, 0].set_anchor('SE')

axarr[1, 0].set_anchor('NE')

axarr[0, 1].set_anchor('SW')

axarr[1, 1].set_anchor('NW')

axarr[2, 0].set_anchor('SE')

axarr[2, 1].set_anchor('SW')

axarr[0][0].imshow(imgx, cmap='binary')

plt.axis('off')

axarr[0][1].imshow(labx)

plt.axis('off')

axarr[1][0].imshow(imgy, cmap='binary')

plt.axis('off')

axarr[1][1].imshow(laby)

plt.axis('off')

axarr[2][0].imshow(imgz, cmap='binary')

plt.axis('off')

axarr[2][1].imshow(labz)

plt.axis('off')

B-A-utiful, we can see the main fault defined by the seismic facies, you can also make some assumptions over the nature of the fault, etc. But we don't really care about that, for now...

Important thing to note.¶

We can see from the previous images that there is a clear difference between looking at these images from the vertical slices compared to looking at them from the top.

This might be an important thing to notice, since the vertical slices contain a lot of "easy to look at" geologic information. As I am new to this world, my insight is that it might be important to consider how to deploy these images into the model.

It is my belief that you should select to train ONLY on pure vertical slices, or ONLY on pure plan view. This also goes into the testing stage, you should test on the same kind of images that you select for training!!

This is the tipical consideration of retaining the same processing for the train and the test sets.

What's on your mind? 🧠¶

The kind of problem that we are trying to attack is an image SEMANTIC segmentation solution. We need to classify every pixel on an image with a given value. Searching around the web I came across with a wonderfull package that allows for a clean pipeline to implement a deep learning model with very few steps.

And I didn't mention that you can even implement already built AND trained models!

This is the keras image segmentation package , which you can check out here: https://github.com/divamgupta/image-segmentation-keras . This package uses a loader to get all the images for training and testing, therefore you've got to get your files out. This helps releasing RAM memory, but also retains the fact that you're maybe generating thousands of low res images.

I took the liberty to modify this package and add a custom augmentation with some flips, scales and random crops to aid our model in learning new stuff.

I implemented a UNet style CNN with skipped connections, you could certainly build one that fits your needs best!

# This block allows you to import the net you would like to implement, tell it

# the sizes of your images and how many classes you'd like to get classified and

# just like that, you're in the game! I recommend checking out the git link to

# get more information on the available models

# Here we have to select the model, setting up how many classes we are going to use and the image size

#model_vgg = vgg_unet(n_classes=6 , input_height=256, input_width=256)

#model_resnet = resnet50_unet(n_classes=6 , input_height=256, input_width=256)

I implemented a model (Encoder/Decoder) with several convolutions on each stage and skipped connections.

Every steps also includes batch normalization I choose an imagesize of 1024 in height by 512 in width to retain information valuable in the z axis and keeping our images as large as possible.

img_input = keras.Input(shape=(1024, 512, 3 )) # We define how our input it's going to be

#ENCODER

conv1 = keras.layers.Conv2D(32, (3, 3), activation='relu', padding='same')(img_input)

conv1 = keras.layers.BatchNormalization()(conv1)

conv1 = keras.layers.Conv2D(32, (3, 3), activation='relu', padding='same')(conv1)

conv1 = keras.layers.BatchNormalization()(conv1)

pool1 = keras.layers.MaxPooling2D((2, 2))(conv1) # Downsampling

conv2 = keras.layers.Conv2D(64, (3, 3), activation='relu', padding='same')(pool1)

conv2 = keras.layers.BatchNormalization()(conv2)

conv2 = keras.layers.Conv2D(64, (3, 3), activation='relu', padding='same')(conv2)

conv2 = keras.layers.BatchNormalization()(conv2)

pool2 = keras.layers.MaxPooling2D((2, 2))(conv2) # Downsampling

conv3 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(pool2)

conv3 = keras.layers.BatchNormalization()(conv3)

conv3 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(conv3)

conv3 = keras.layers.BatchNormalization()(conv3)

conv3 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(conv3)

conv3 = keras.layers.BatchNormalization()(conv3)

pool3 = keras.layers.MaxPooling2D((2, 2))(conv3) # Downsampling

conv4 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(pool3)

conv4 = keras.layers.BatchNormalization()(conv4)

conv4 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(conv4)

conv4 = keras.layers.BatchNormalization()(conv4)

conv4 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(conv4)

conv4 = keras.layers.BatchNormalization()(conv4)

pool4 = keras.layers.MaxPooling2D((2, 2))(conv4) #Downsampling

# DECODER

conv5 = keras.layers.Conv2D(512, (3, 3), activation='relu', padding='same')(pool4)

conv5 = keras.layers.BatchNormalization()(conv5)

conv5 = keras.layers.Conv2D(512, (3, 3), activation='relu', padding='same')(conv5)

conv5 = keras.layers.BatchNormalization()(conv5)

conv5 = keras.layers.Conv2D(512, (3, 3), activation='relu', padding='same')(conv5)

conv5 = keras.layers.BatchNormalization()(conv5)

conv5 = keras.layers.Conv2D(512, (3, 3), activation='relu', padding='same')(conv5)

conv5 = keras.layers.BatchNormalization()(conv5)

up1 = keras.layers.UpSampling2D((2, 2))(conv5) # Upsampling

conv6 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(up1)

conv6 = keras.layers.BatchNormalization()(conv6)

conv6 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(conv6)

conv6 = keras.layers.BatchNormalization()(conv6)

conv6 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(conv6)

conv6 = keras.layers.BatchNormalization()(conv6)

up2 = keras.layers.concatenate([keras.layers.UpSampling2D((2, 2))(conv6), conv3], axis=-1) # Upsampling and skipping

conv7 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(up2)

conv7 = keras.layers.BatchNormalization()(conv7)

#conv7 = keras.layers.Dropout(0.2)(conv7)

conv7 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(conv7)

conv7 = keras.layers.BatchNormalization()(conv7)

conv7 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(conv7)

conv7 = keras.layers.BatchNormalization()(conv7)

up3 = keras.layers.UpSampling2D((2, 2))(conv7) # Upsampling

conv8 = keras.layers.Conv2D(64, (3, 3), activation='relu', padding='same')(up3)

conv8 = keras.layers.BatchNormalization()(conv8)

conv8 = keras.layers.Conv2D(64, (3, 3), activation='relu', padding='same')(conv8)

conv8 = keras.layers.BatchNormalization()(conv8)

up4 = keras.layers.concatenate([keras.layers.UpSampling2D((2, 2))(conv8), conv1], axis=-1) # Upsampling and skipping

conv9 = keras.layers.Conv2D(32, (3, 3), activation='relu', padding='same')(up4)

conv9 = keras.layers.BatchNormalization()(conv9)

conv9 = keras.layers.Conv2D(32, (3, 3), activation='relu', padding='same')(conv9)

conv9 = keras.layers.BatchNormalization()(conv9)

out = keras.layers.Conv2D(6, (1, 1) , padding='same')(conv9) # Getting the last layers with the 6 classes

model = get_segmentation_model(img_input , out ) # the function get will build the segmentation model

Why model first? and how I learned to set up 2D images¶

You could clearly ask that. Well, this just happened so we could get to know which size we should resize our seismic images to! Let's get into that.

I used several approaches. Many of them included using seismic attributes as part of the RGB channels, so I could fit more information into a single image. This sometimes worked, other times it didn't.

My final approach used the vertical slices, since I get more images for training (782 + 590 = 1372 against 1006 in the vertical axis). Changing the scales of the images should't trouble our net, but using interpolation if we downsize will, this is why we try to keep our images as large as possible

There is also something to take into account in the labels set: the range of labels in the given set goes from 1-6, we don't want that. Well, actually our net doesn't want that, so firstly we change all our 6s to 0s, remapping the labels. The keras image segmentation package has an option to ignore the 0 label altogether (which clearly helps and speeds things up if you're using padding)

Y[Y==6] = 0 #replacing the 6s with 0s bros

# We also create the folders for our files

!mkdir training_imgs

!mkdir training_labels

!mkdir testing_imgs

!mkdir output_imgs

!mkdir model

Let's generate our training images¶

for i in tqdm(range(0, X.shape[1])):

img = X[:, i, :]

label = Y[:, i, :]

img = np.expand_dims(img, axis=2).astype('float32')

label = np.expand_dims(label, axis=2).astype('float32')

img = cv2.normalize(img, None, 0, 255, cv2.NORM_MINMAX, cv2.CV_8U)

label = cv2.resize(label, (512,1024), interpolation = cv2.INTER_AREA)

img = cv2.resize(img, (512,1024), interpolation = cv2.INTER_AREA)

label = np.around(label)

label = label.astype(np.uint8)

cv2.imwrite('/content/training_imgs/image_x_%03d.png' % i, img)

cv2.imwrite('/content/training_labels/image_x_%03d.png' % i, label)

# Define the Y lines slices for training

for i in tqdm(range(0, X.shape[2])):

img = X[:, :, i]

label = Y[:, :, i]

img = np.expand_dims(img, axis=2).astype('float32')

label = np.expand_dims(label, axis=2).astype('float32')

img = cv2.normalize(img, None, 0, 255, cv2.NORM_MINMAX, cv2.CV_8U)

label = cv2.resize(label, (512,1024), interpolation = cv2.INTER_AREA)

img = cv2.resize(img, (512,1024), interpolation = cv2.INTER_AREA)

label = np.around(label)

label = label.astype(np.uint8)

cv2.imwrite('/content/training_imgs/image_y_%03d.png' % i, img)

cv2.imwrite('/content/training_labels/image_y_%03d.png' % i, label)

Let's see what we've got!

# Let's see what we've got!

# Reading images for comparison

x_300 = cv2.imread('/content/training_imgs/image_x_300.png')

x_300_l = cv2.imread('/content/training_labels/image_x_300.png', cv2.IMREAD_UNCHANGED)

y_300 = cv2.imread('/content/training_imgs/image_y_300.png')

y_300_l = cv2.imread('/content/training_labels/image_y_300.png', cv2.IMREAD_UNCHANGED)

# Ploting

plt.rcParams["figure.figsize"] = (20, 20)

plt.figure()

f, axarr = plt.subplots(2,1)

# Use the created array to output your multiple images. Take into account that because we used

# the RGB capabilities you we have to subselect the seismic image (or not...)

axarr[0].imshow(x_300[:,:,0], cmap='binary_r')

axarr[0].imshow(x_300_l, alpha=0.5)

axarr[1].imshow(y_300[:,:,0], cmap='binary_r')

axarr[1].imshow(y_300_l, alpha=0.5)

Setting up Training¶

Let's go back into your mind¶

Let's see a summary of our model and train it.

model.summary()

That's a big model we've got there, WHAT R THOSE! (probably killing a spider with a cannon, but don't ruin the fun).

Have I already said that this package is wonderful? Well, for training you just tell it where your training images and labels are, and away we go! I have taken the liberty to modify the package and add some very simple image augmentation.

# We train our model

model.train(train_images = "/content/training_imgs/", train_annotations = "/content/training_labels",

epochs=10, verify_dataset= False, do_augment=True, augmentation_name='aug_seismic', batch_size=4, steps_per_epoch=342)

This is surely overfitting. I didn't pulled out a validation set, I'll leave that to you, but there are several things you could add for this to enhance it... Watch until the end!

# We can even save the weights for later

model.save_weights("/content/model/model.h5")

# And even load the weights afterwards! Remember to build the same net please

#model_new = model_new.load_weights("path to weights")

#model_propio = get_segmentation_model(img_input , out ) # this would build the segmentation model again

What happens after the storm¶

By now you probably want to know how close your net got to the labels, so let's see.

#model_propio = get_segmentation_model(img_input , out ) # this would build the segmentation model

# We test our model against the training set

training_test = model.predict_segmentation(inp="/content/training_imgs/image_x_460.png", out_fname="/content/model/output.png")

label_test = cv2.imread("/content/training_labels/image_x_460.png", cv2.IMREAD_UNCHANGED)

plt.rcParams["figure.figsize"] = (15, 10)

f, axarr = plt.subplots(1,2)

axarr[0].imshow(label_test)

axarr[1].imshow(training_test)

Depending on how well we did, this could look similar or not... As we can see, we have something very similar, as expected. We could want to see if we got the right amount of labels...

print('The amount of labels on the training label set was: {}.'.format(np.unique(label_test)), 'The amount of labels on the predicted example is: {}'.format(np.unique(training_test)))

Testing the test for testing how well we'll test¶

So now that we've got our model trained, we'd like to get our fricking labels for something it hasn't seen before, don't we? We first need to load our test data

# We load the testing data

test_data = np.load("/content/data_test_1.npz",

allow_pickle=True, mmap_mode = 'r')['data']

Now we should pre-process our test data the same way we did with our test data. I took the X axis, do as you please by changing the .shape value. As I said, we have to pre-process the test data the same way we did with our training data, so this step will depend on the approach you chose before! Choose wisely...

# Using the first approach, use this

# Generating the test images in the X axis

for i in tqdm(range(test_data.shape[2])):

img = test_data[:, :, i]

img = np.expand_dims(img, axis=2).astype('float32')

img = cv2.normalize(img, None, 0, 255, cv2.NORM_MINMAX, cv2.CV_8U)

img = cv2.resize(img, (768,1024), interpolation = cv2.INTER_AREA)

cv2.imwrite('/content/testing_imgs/image_y_%03d.png' % i, img)

Rebuilding..¶

We are going to rebuild our net to fit our test images better, and append to it the weights from our training model.

img_input_test = keras.Input(shape=(1024, 768, 3 )) # We define how our input it's going to be

#ENCODER

conv1 = keras.layers.Conv2D(32, (3, 3), activation='relu', padding='same')(img_input_test)

conv1 = keras.layers.BatchNormalization()(conv1)

conv1 = keras.layers.Conv2D(32, (3, 3), activation='relu', padding='same')(conv1)

conv1 = keras.layers.BatchNormalization()(conv1)

pool1 = keras.layers.MaxPooling2D((2, 2))(conv1) # Downsampling

conv2 = keras.layers.Conv2D(64, (3, 3), activation='relu', padding='same')(pool1)

conv2 = keras.layers.BatchNormalization()(conv2)

conv2 = keras.layers.Conv2D(64, (3, 3), activation='relu', padding='same')(conv2)

conv2 = keras.layers.BatchNormalization()(conv2)

pool2 = keras.layers.MaxPooling2D((2, 2))(conv2) # Downsampling

conv3 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(pool2)

conv3 = keras.layers.BatchNormalization()(conv3)

conv3 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(conv3)

conv3 = keras.layers.BatchNormalization()(conv3)

conv3 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(conv3)

conv3 = keras.layers.BatchNormalization()(conv3)

pool3 = keras.layers.MaxPooling2D((2, 2))(conv3) # Downsampling

conv4 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(pool3)

conv4 = keras.layers.BatchNormalization()(conv4)

conv4 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(conv4)

conv4 = keras.layers.BatchNormalization()(conv4)

conv4 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(conv4)

conv4 = keras.layers.BatchNormalization()(conv4)

pool4 = keras.layers.MaxPooling2D((2, 2))(conv4) #Downsampling

# DECODER

conv5 = keras.layers.Conv2D(512, (3, 3), activation='relu', padding='same')(pool4)

conv5 = keras.layers.BatchNormalization()(conv5)

conv5 = keras.layers.Conv2D(512, (3, 3), activation='relu', padding='same')(conv5)

conv5 = keras.layers.BatchNormalization()(conv5)

conv5 = keras.layers.Conv2D(512, (3, 3), activation='relu', padding='same')(conv5)

conv5 = keras.layers.BatchNormalization()(conv5)

conv5 = keras.layers.Conv2D(512, (3, 3), activation='relu', padding='same')(conv5)

conv5 = keras.layers.BatchNormalization()(conv5)

up1 = keras.layers.UpSampling2D((2, 2))(conv5) # Upsampling

conv6 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(up1)

conv6 = keras.layers.BatchNormalization()(conv6)

conv6 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(conv6)

conv6 = keras.layers.BatchNormalization()(conv6)

conv6 = keras.layers.Conv2D(256, (3, 3), activation='relu', padding='same')(conv6)

conv6 = keras.layers.BatchNormalization()(conv6)

up2 = keras.layers.concatenate([keras.layers.UpSampling2D((2, 2))(conv6), conv3], axis=-1) # Upsampling and skipping

conv7 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(up2)

conv7 = keras.layers.BatchNormalization()(conv7)

#conv7 = keras.layers.Dropout(0.2)(conv7)

conv7 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(conv7)

conv7 = keras.layers.BatchNormalization()(conv7)

conv7 = keras.layers.Conv2D(128, (3, 3), activation='relu', padding='same')(conv7)

conv7 = keras.layers.BatchNormalization()(conv7)

up3 = keras.layers.UpSampling2D((2, 2))(conv7) # Upsampling

conv8 = keras.layers.Conv2D(64, (3, 3), activation='relu', padding='same')(up3)

conv8 = keras.layers.BatchNormalization()(conv8)

conv8 = keras.layers.Conv2D(64, (3, 3), activation='relu', padding='same')(conv8)

conv8 = keras.layers.BatchNormalization()(conv8)

up4 = keras.layers.concatenate([keras.layers.UpSampling2D((2, 2))(conv8), conv1], axis=-1) # Upsampling and skipping

conv9 = keras.layers.Conv2D(32, (3, 3), activation='relu', padding='same')(up4)

conv9 = keras.layers.BatchNormalization()(conv9)

conv9 = keras.layers.Conv2D(32, (3, 3), activation='relu', padding='same')(conv9)

conv9 = keras.layers.BatchNormalization()(conv9)

out = keras.layers.Conv2D(6, (1, 1) , padding='same')(conv9) # Getting the last layers with the 6 classes

model_new = get_segmentation_model(img_input_test , out ) # the function get will build the segmentation model

model_new.set_weights(model.get_weights()) #setting the weights from the original model on the new model

Feed the monster 👹¶

We now give each generated test image to the model and append its result to a list so we can then generate the exit files.

# We create an empty list

test_labels = []

# We give the model each image and append its result to a list (take into account that it generates output images)

for i in tqdm(range(test_data.shape[2])):

out = model_new.predict_segmentation(inp="/content/testing_imgs/image_y_%03d.png" % i)

test_labels.append(out)

We'd like to check out some of the results, so we convert to an array and plot what we got for a random value of X (take into account that our images are given by a slice (y,z)).

# Convert to an array

test_labels = np.asarray(test_labels)

# We resize a test data image

test_image = cv2.resize(test_data[:,:,200], (768,1024), interpolation = cv2.INTER_AREA)

# Plot the seismic and the labels predicted

plt.rcParams["figure.figsize"] = (30, 20)

f, axarr = plt.subplots(1,2)

axarr[0].imshow(test_image, cmap='binary')

axarr[1].imshow(test_labels[200,:,:])

Does it look good? This is the time where you could say if this makes sense... I am sure you can do better!

Are we done yet?¶

Now we need to resize our predicted labels images to their original size and get the right shapes for the output predictions.

# We define a function to resize our data

def reshaping(data, out_shape):

output_labels = []

for i in data:

img = i.astype('float32')

img = cv2.resize(img, out_shape)

output_labels.append(img)

output_labels = np.asarray(output_labels)

output_labels = np.around(output_labels) # We round the values (to deal with the interpolation made)

output_labels = output_labels.astype(np.uint8) # We change everything to ints

return np.swapaxes(output_labels,0,1) # We need this since we changed the order of the axis

# We resize with the given function

test_labels = reshaping(test_labels, (782,1006)) # Beware that the size argument depends on which axis you chose to test

We're not done yet! Remember we changed our 6s to 0s, let's undo that!

# We change the label 0 to 6

test_labels[test_labels == 0] = 6

# We do a sanity check on the shape and the unique labels

print(test_labels.shape, np.unique(test_labels))

Woops! Those shapes don't look right! This is probably an artifact of the reshaping function, let's fix that. We could also do the swap inside the function to get the correct shape in one step.

test_labels = np.swapaxes(test_labels,1,2)

# We do a sanity check on the shape and the unique labels

print(test_labels.shape, np.unique(test_labels))

Just let me go watch the sky please!¶

And finally we save our predictions!

# We save the predictions

# Saving the Predictions

np.savez_compressed(

"/content/prediction.npz",

prediction=test_labels

)

Ok, ok, now you can go enjoy nature, beware of the facies that might be below you!

Just kidding, there's still something left!¶

As I said before, this example clearly overfits to the training set. There's still lots of things to do:

You could build a model that accepts more than 3 channels (beware that the keras-image-segmentation package does not currently support this AFAIK).

You could try some image augmentation over the training set. This is a tricky one, I wouldn't recommend using filters that smooth the image. I recommed fliping images horizontally, but not vertically, since the disposition of the reflectors should remain with at leas some geologic meaning (keras-image-segmentation provides a package to do this, check it out!).

You could try taking patches at each seismic slice (this might require some heavy lifting on the memory department). By choosing small parts of the seismic image (such as 64x64 patches) on each slide you'll end up with A TON of new information and even more detailed one, this should help get better results.

Use combinations of seismic attributes: you could try scaling between seismic attributes to get some results.

Try waveform classification: AHA! We didn't actually talked about the fact that seismic information can be reduced to a 1D array that actually holds the information that helps classifying the seismic facies. The waveform corresponds to the seismic response of each interfase, therefore it could be use to enhance the classification. Although this would chance our approach on using 2D images. Give it a shot!

Try some already trained model: use the prettiness of the keras-image-segmentation package to take advantage of some very well known models around.

OK, THAT'S IT, YOU'RE A FREE ELF DOBBIE! 🧦¶

Content

Comments

You must login before you can post a comment.

Hi there!

The notebook has been updated, its END-TO-END solution throws an F1-score of .555 and an accuracy of .787 aproximately.

Of course this could be enhanced, but keep in mind that the objective of this notebook is to explain the process in an image segmentation problem and to keep the reader on topic on what it’s being done!

Hope some of you find it useful! Don’t forget to like this topic!

Colab Link