Learning to Smell

Where to start? 5 ways to learn 2 smell!


Hi everyone!

@rohitmidha23 and I are undergrad students studying computer science, and we found this challenge a particularly interesting way to explore the applications of ML in chemistry. We have written a notebook that explores 5 ways to attempt this challenge. It includes baselines for

- ChemBERTa

- Graph Conv Networks

- MultiTaskClassifier using Molecular Fingerprints

- Sklearn Classifiers (Random Forest etc.) using Molecular Fingerprints

- Chemception (2D representation of molecules)

Check it out @ https://colab.research.google.com/drive/1-RedHEQSAVKUowOx2p-QoKthxayRshUa?usp=sharing

The most difficult task in this challenge is getting good representations of SMILES that ML algorithms can understand, and we have tried to give examples of how that has been done in the past for these kinds of tasks.

We hope that this notebook helps out other beginners like ourselves.

As always we are open to any feedback, suggestions and criticism!

If you found our work helpful, do drop us a  !


AICrowd Learning To Smell Challenge

What is the challenge exactly?

This challenge is about predicting the different smells associated with a molecule. The information on which we base the prediction is the molecule's SMILES string. Each molecule is labelled with multiple smells, and there are 109 distinct smells in total.

What is a smile?

SMILES (Simplified Molecular Input Line Entry System) is a chemical notation that allows a user to represent a chemical structure in a way that can be used by the computer. They describe the structure of chemical species using short ASCII strings.
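To make the notation concrete, here is a minimal sketch that splits a SMILES string into atom-level tokens with a regular expression. The pattern and the helper name `tokenize_smiles` are assumptions made for illustration; this is not the tokenizer ChemBERTa uses, and it only covers the common symbols seen in this dataset.

```python
import re

# Illustrative atom-level pattern (an assumption, not the ChemBERTa tokenizer).
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]"            # bracket atoms such as [nH] or [C@H]
    r"|Br|Cl"                # two-letter halogens, tried before single letters
    r"|[BCNOPSFI]"           # aliphatic atoms of the organic subset
    r"|[bcnops]"             # aromatic atoms
    r"|%\d{2}|\d"            # ring-closure labels
    r"|[()=#\-+\\/:.~@*$]"   # branches, bonds and other symbols
)


def tokenize_smiles(smiles):
    """Split a SMILES string into tokens; fail loudly on unknown characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError("unrecognised characters in SMILES: " + smiles)
    return tokens


print(tokenize_smiles("Cc1cc2c([nH]1)cccc2"))
```

The round-trip check (joining the tokens must give back the input) is a cheap way to notice symbols the pattern does not cover.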

What is the most important task in this challenge?

The most important task here is obtaining a meaningful representation of each SMILES string. There are several ways to do this, and this notebook gives you several pathways to a representation that can then be used in an ML pipeline. The different ways discussed here are:

- Tokenizing SMILES and using ChemBERTa

- Graph Conv

- Molecular Fingerprints

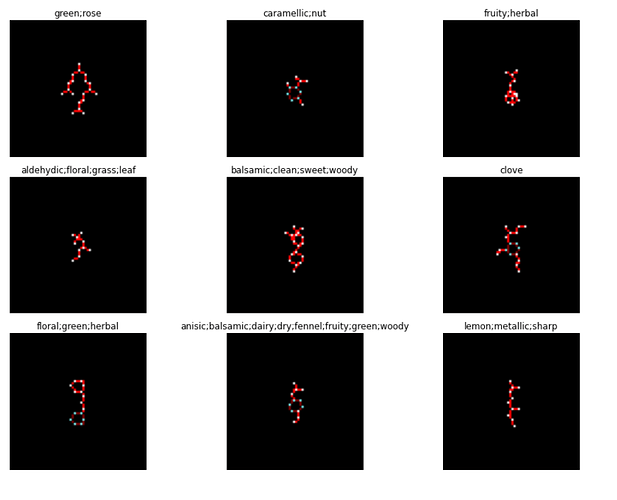

- 2D representation of molecules (Chemception)

Download the Data

!gdown --id 1t5be8KLHOz3YuSmiiPQjopb4c_q2U4tG

!unzip olfactorydata.zip

#thanks mmi333

Downloading...

From: https://drive.google.com/uc?id=1t5be8KLHOz3YuSmiiPQjopb4c_q2U4tG

To: /content/olfactorydata.zip

100% 94.3k/94.3k [00:00<00:00, 36.0MB/s]

Archive: olfactorydata.zip

inflating: train.csv

inflating: test.csv

inflating: sample_submission.csv

inflating: vocabulary.txt

!mkdir data

!mv train.csv data

!mv test.csv data

!mv vocabulary.txt data

!mv sample_submission.csv data

Install required libraries

import sys
import os
import requests
import subprocess
import shutil
from logging import getLogger, StreamHandler, INFO

logger = getLogger(__name__)
logger.addHandler(StreamHandler())
logger.setLevel(INFO)


def install(
        chunk_size=4096,
        file_name="Miniconda3-latest-Linux-x86_64.sh",
        url_base="https://repo.continuum.io/miniconda/",
        conda_path=os.path.expanduser(os.path.join("~", "miniconda")),
        rdkit_version=None,
        add_python_path=True,
        force=False):
    """install rdkit from miniconda

    ```
    import rdkit_installer
    rdkit_installer.install()
    ```
    """
    # site-packages directory of the miniconda environment
    python_path = os.path.join(
        conda_path,
        "lib",
        "python{0}.{1}".format(*sys.version_info),
        "site-packages",
    )

    if add_python_path and python_path not in sys.path:
        logger.info("add {} to PYTHONPATH".format(python_path))
        sys.path.append(python_path)

    if os.path.isdir(os.path.join(python_path, "rdkit")):
        logger.info("rdkit is already installed")
        if not force:
            return
        logger.info("force re-install")

    url = url_base + file_name
    python_version = "{0}.{1}.{2}".format(*sys.version_info)
    logger.info("python version: {}".format(python_version))

    # remove any previous installation at conda_path
    if os.path.isdir(conda_path):
        logger.warning("remove current miniconda")
        shutil.rmtree(conda_path)
    elif os.path.isfile(conda_path):
        logger.warning("remove {}".format(conda_path))
        os.remove(conda_path)

    # download the miniconda installer in chunks
    logger.info('fetching installer from {}'.format(url))
    res = requests.get(url, stream=True)
    res.raise_for_status()
    with open(file_name, 'wb') as f:
        for chunk in res.iter_content(chunk_size):
            f.write(chunk)
    logger.info('done')

    logger.info('installing miniconda to {}'.format(conda_path))
    subprocess.check_call(["bash", file_name, "-b", "-p", conda_path])
    logger.info('done')

    logger.info("installing rdkit")
    subprocess.check_call([
        os.path.join(conda_path, "bin", "conda"),
        "install",
        "--yes",
        "-c", "rdkit",
        "python=={}".format(python_version),
        "rdkit" if rdkit_version is None else "rdkit=={}".format(rdkit_version)])
    logger.info("done")

    import rdkit
    logger.info("rdkit-{} installation finished!".format(rdkit.__version__))


install()

add /root/miniconda/lib/python3.6/site-packages to PYTHONPATH

python version: 3.6.9

fetching installer from https://repo.continuum.io/miniconda/Miniconda3-latest-Linux-x86_64.sh

done

installing miniconda to /root/miniconda

done

installing rdkit

done

rdkit-2020.09.1 installation finished!

!pip install -q transformers

!pip install -q simpletransformers

# !pip install wandb #Uncomment if you want to use wandb


ERROR: google-colab 1.0.0 has requirement ipykernel~=4.10, but you'll have ipykernel 5.3.4 which is incompatible.

ERROR: seqeval 1.2.1 has requirement numpy==1.19.2, but you'll have numpy 1.18.5 which is incompatible.

ERROR: seqeval 1.2.1 has requirement scikit-learn==0.23.2, but you'll have scikit-learn 0.22.2.post1 which is incompatible.

ERROR: botocore 1.19.2 has requirement urllib3<1.26,>=1.25.4; python_version != "3.4", but you'll have urllib3 1.24.3 which is incompatible.

ChemBERTa

ChemBERTa is a collection of BERT-like models applied to chemical SMILES data for drug design, chemical modelling, and property prediction. We fine-tune this existing model for our application.

First we visualize the attention heads using the bertviz library. We can use this tool to see whether the model in fact understands the SMILES it is processing.

We will be using the pretrained tokenizer; if we trained our own tokenizer, the results would probably be better.

I plan on implementing this soon, but I have included a link in the References section of this notebook if you want to have a crack at it.

%%javascript
require.config({
  paths: {
    d3: '//cdnjs.cloudflare.com/ajax/libs/d3/3.4.8/d3.min',
    jquery: '//ajax.googleapis.com/ajax/libs/jquery/2.0.0/jquery.min',
  }
});

def call_html():
    import IPython
    display(IPython.core.display.HTML('''
        <script src="/static/components/requirejs/require.js"></script>
        <script>
          requirejs.config({
            paths: {
              base: '/static/base',
              "d3": "https://cdnjs.cloudflare.com/ajax/libs/d3/3.5.8/d3.min",
              jquery: '//ajax.googleapis.com/ajax/libs/jquery/2.0.0/jquery.min',
            },
          });
        </script>
        '''))

Let's load the training data, look at a few molecules that share the same label, and pass them to the pretrained RoBERTa model (trained on the ZINC 250k dataset).

import pandas as pd

import numpy as np

train_df = pd.read_csv("data/train.csv")

train_df.head()

| | SMILES | SENTENCE |

|---|---|---|

| 0 | C/C=C/C(=O)C1CCC(C=C1C)(C)C | fruity,rose |

| 1 | COC(=O)OC | fresh,ethereal,fruity |

| 2 | Cc1cc2c([nH]1)cccc2 | resinous,animalic |

| 3 | C1CCCCCCCC(=O)CCCCCCC1 | powdery,musk,animalic |

| 4 | CC(CC(=O)OC1CC2C(C1(C)CC2)(C)C)C | coniferous,camphor,fruity |

train_df.loc[train_df["SENTENCE"]=="resinous,animalic"]

| | SMILES | SENTENCE |

|---|---|---|

| 2 | Cc1cc2c([nH]1)cccc2 | resinous,animalic |

| 1108 | Cc1nc2c(o1)cccc2 | resinous,animalic |

| 3183 | Cc1ccc2c(n1)cccc2 | resinous,animalic |

import torch

import rdkit

import rdkit.Chem as Chem

from rdkit.Chem import rdFMCS

from matplotlib import colors

from rdkit.Chem import Draw

from rdkit.Chem.Draw import MolToImage

m = Chem.MolFromSmiles('Cc1nc2c(o1)cccc2')

fig = Draw.MolToMPL(m, size=(200, 200))

m = Chem.MolFromSmiles('Cc1ccc2c(n1)cccc2')

fig = Draw.MolToMPL(m, size=(200,200))

!git clone https://github.com/jessevig/bertviz.git

import sys

sys.path.append("bertviz")

from transformers import RobertaModel, RobertaTokenizer

from bertviz import head_view

model_version = 'seyonec/ChemBERTa_zinc250k_v2_40k'

model = RobertaModel.from_pretrained(model_version, output_attentions=True)

tokenizer = RobertaTokenizer.from_pretrained(model_version)

sentence_a = "Cc1cc2c([nH]1)cccc2"

sentence_b = "Cc1ccc2c(n1)cccc2"

inputs = tokenizer.encode_plus(sentence_a, sentence_b, return_tensors='pt', add_special_tokens=True)

input_ids = inputs['input_ids']

attention = model(input_ids)[-1]

input_id_list = input_ids[0].tolist() # Batch index 0

tokens = tokenizer.convert_ids_to_tokens(input_id_list)

call_html()

head_view(attention, tokens)

This is a pretty cool visualization of the attention heads. Please do explore the bertviz library to look at similar visualisations, i.e. the model view and the neuron view!

Now we fine-tune ChemBERTa for our application!

For this we will be using the simpletransformers library. Of course, we might get better results with some hyperparameter tuning, but for a baseline let us stick with the default params.

from simpletransformers.classification import MultiLabelClassificationModel

import pandas as pd

import logging

# Uncomment for logging

# logging.basicConfig(level=logging.INFO)

# transformers_logger = logging.getLogger("transformers")

# transformers_logger.setLevel(logging.WARNING)

model = MultiLabelClassificationModel(
    'roberta',
    'seyonec/ChemBERTa_zinc250k_v2_40k',
    num_labels=109,
    args={
        'num_train_epochs': 10,
        'auto_weights': True,
        'reprocess_input_data': True,
        'overwrite_output_dir': True,
        'use_cuda': True,
        # 'wandb_project': 'l2s',  # Uncomment to use wandb
    },
)

# You can set class weights by using the optional weight argument

Some weights of the model checkpoint at seyonec/ChemBERTa_zinc250k_v2_40k were not used when initializing RobertaForMultiLabelSequenceClassification: ['lm_head.bias', 'lm_head.dense.weight', 'lm_head.dense.bias', 'lm_head.layer_norm.weight', 'lm_head.layer_norm.bias', 'lm_head.decoder.weight', 'lm_head.decoder.bias'] - This IS expected if you are initializing RobertaForMultiLabelSequenceClassification from the checkpoint of a model trained on another task or with another architecture (e.g. initializing a BertForSequenceClassification model from a BertForPretraining model). - This IS NOT expected if you are initializing RobertaForMultiLabelSequenceClassification from the checkpoint of a model that you expect to be exactly identical (initializing a BertForSequenceClassification model from a BertForSequenceClassification model). Some weights of RobertaForMultiLabelSequenceClassification were not initialized from the model checkpoint at seyonec/ChemBERTa_zinc250k_v2_40k and are newly initialized: ['classifier.dense.weight', 'classifier.dense.bias', 'classifier.out_proj.weight', 'classifier.out_proj.bias'] You should probably TRAIN this model on a down-stream task to be able to use it for predictions and inference.

with open("/content/data/vocabulary.txt") as f:
    vocab = f.read().split('\n')

def get_ohe_label(sentence):
    # Multi-hot encode a comma-separated label string against the vocabulary
    sentence = sentence.split(',')
    ohe_sent = len(vocab)*[0]
    for i,x in enumerate(vocab):
        if x in sentence:
            ohe_sent[i] = 1
    return ohe_sent

labels = []
for x in train_df.SENTENCE.tolist():
    labels.append(get_ohe_label(x))

data_df = pd.DataFrame(train_df["SMILES"].tolist(),columns=["text"])# pd.DataFrame(labels,columns = vocab)
data_df["labels"]= labels
data_df.head()

| | text | labels |

|---|---|---|

| 0 | C/C=C/C(=O)C1CCC(C=C1C)(C)C | [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, ... |

| 1 | COC(=O)OC | [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, ... |

| 2 | Cc1cc2c([nH]1)cccc2 | [0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, ... |

| 3 | C1CCCCCCCC(=O)CCCCCCC1 | [0, 0, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0, 0, 0, ... |

| 4 | CC(CC(=O)OC1CC2C(C1(C)CC2)(C)C)C | [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, ... |
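The `labels` column is a multi-hot vector: position `i` is 1 when the molecule carries the `i`-th vocabulary smell. A minimal sketch of the round trip on a toy vocabulary (the four-word vocabulary and the helper names `encode_labels` and `decode_labels` are hypothetical; the real vocabulary has 109 entries):

```python
def encode_labels(sentence, vocab):
    """Turn 'a,b' into a 0/1 vector aligned with vocab."""
    present = set(sentence.split(","))
    return [1 if smell in present else 0 for smell in vocab]


def decode_labels(vector, vocab):
    """Recover the smell names from a 0/1 vector, in vocabulary order."""
    return [smell for smell, flag in zip(vocab, vector) if flag == 1]


toy_vocab = ["animalic", "fruity", "resinous", "rose"]  # hypothetical vocabulary
vec = encode_labels("resinous,animalic", toy_vocab)
print(vec)                            # [1, 0, 1, 0]
print(decode_labels(vec, toy_vocab))  # ['animalic', 'resinous']
```

Decoding returns the smells in vocabulary order, so the original order inside the sentence is not preserved.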

# Split the train and test dataset 90-10
train_size = 0.9

# Sample first and drop by the original index before resetting it;
# resetting the index first would drop rows 0..N-1 instead of the sampled rows.
train_dataset = data_df.sample(frac=train_size, random_state=42)
test_dataset = data_df.drop(train_dataset.index).reset_index(drop=True)
train_dataset = train_dataset.reset_index(drop=True)

# Check that our train and evaluation dataframes are set up properly.
# There should only be two columns: the SMILES string and its corresponding label.
print("FULL Dataset: {}".format(data_df.shape))
print("TRAIN Dataset: {}".format(train_dataset.shape))
print("TEST Dataset: {}".format(test_dataset.shape))

FULL Dataset: (4316, 2) TRAIN Dataset: (3884, 2) TEST Dataset: (432, 2)
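A hold-out split is only useful when no row lands in both sets. A minimal sketch of that check, using plain Python and row ids instead of the DataFrame (the helper name `holdout_split` is hypothetical, and the shuffle will not reproduce the exact rows pandas samples):

```python
import random


def holdout_split(n_rows, train_frac=0.9, seed=42):
    """Shuffle row ids and cut them into disjoint train and test lists."""
    ids = list(range(n_rows))
    random.Random(seed).shuffle(ids)
    n_train = round(n_rows * train_frac)
    return sorted(ids[:n_train]), sorted(ids[n_train:])


train_ids, test_ids = holdout_split(4316)

# The two sets must not share a row, and together they must cover every row.
assert not set(train_ids) & set(test_ids)
assert len(train_ids) + len(test_ids) == 4316
print(len(train_ids), len(test_ids))  # 3884 432
```

The same two assertions can be run against the index of any train and test DataFrame before the index is reset.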

# !wandb login ## Log into wandb if you want to keep an eye on how your model is training

!rm -rf outputs

Time to Train!

model.train_model(train_dataset)

/usr/local/lib/python3.6/dist-packages/torch/optim/lr_scheduler.py:231: UserWarning: To get the last learning rate computed by the scheduler, please use `get_last_lr()`.

warnings.warn("To get the last learning rate computed by the scheduler, "

/usr/local/lib/python3.6/dist-packages/torch/optim/lr_scheduler.py:200: UserWarning: Please also save or load the state of the optimzer when saving or loading the scheduler. warnings.warn(SAVE_STATE_WARNING, UserWarning)

Exception ignored in: <bound method _MultiProcessingDataLoaderIter.__del__ of <torch.utils.data.dataloader._MultiProcessingDataLoaderIter object at 0x7f473bc74e48>>

Traceback (most recent call last):

File "/usr/local/lib/python3.6/dist-packages/torch/utils/data/dataloader.py", line 1101, in __del__

self._shutdown_workers()

File "/usr/local/lib/python3.6/dist-packages/torch/utils/data/dataloader.py", line 1075, in _shutdown_workers

w.join(timeout=_utils.MP_STATUS_CHECK_INTERVAL)

File "/usr/lib/python3.6/multiprocessing/process.py", line 122, in join

assert self._parent_pid == os.getpid(), 'can only join a child process'

AssertionError: can only join a child process

(4860, 0.11029124964534501)

import sklearn

result, model_outputs, wrong_predictions = model.eval_model(test_dataset)

print(result)

print(model_outputs)

{'LRAP': 0.48465526156536565, 'eval_loss': 0.0850957441661093}

[[0.00508118 0.00619125 0.01182556 ... 0.0051384 0.01132965 0.03271484]

[0.0078125 0.00818634 0.05340576 ... 0.00867462 0.00963593 0.025177 ]

[0.00346947 0.01490021 0.00288963 ... 0.00933838 0.006073 0.22290039]

...

[0.00340271 0.01069641 0.00712204 ... 0.00698471 0.00468063 0.04803467]

[0.00547028 0.0050621 0.01963806 ... 0.00720596 0.00772476 0.03271484]

[0.00400543 0.02442932 0.00223351 ... 0.00769424 0.00413513 0.58496094]]
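The `LRAP` value in the result is the label ranking average precision: for every true label of a sample, it asks what fraction of the labels ranked at or above it are also true, then averages over labels and samples. A minimal pure-Python sketch of that definition (the function name `lrap` is hypothetical, and scoring a sample with no true labels as 1.0 is an assumption of this sketch):

```python
def lrap(y_true, y_score):
    """Label ranking average precision for 0/1 label rows and score rows."""
    total = 0.0
    for truth, scores in zip(y_true, y_score):
        relevant = [j for j, t in enumerate(truth) if t == 1]
        if not relevant:
            total += 1.0  # assumption: nothing to rank counts as perfect
            continue
        sample = 0.0
        for j in relevant:
            # labels scored at least as high as true label j
            rank = sum(1 for s in scores if s >= scores[j])
            # how many of those are themselves true labels
            hits = sum(1 for k in relevant if scores[k] >= scores[j])
            sample += hits / rank
        total += sample / len(relevant)
    return total / len(y_true)


toy_true = [[1, 0, 0], [0, 0, 1]]
toy_score = [[0.75, 0.5, 1.0], [1.0, 0.2, 0.1]]
print(lrap(toy_true, toy_score))
```

In the toy data the first true label is ranked second (1/2) and the second is ranked third (1/3), so the average is 5/12.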

Generate the test predictions

test_df = pd.read_csv("/content/data/test.csv")

test_df.head()

| | SMILES |

|---|---|

| 0 | CCC(C)C(=O)OC1CC2CCC1(C)C2(C)C |

| 1 | CC(C)C1CCC(C)CC1OC(=O)CC(C)O |

| 2 | CC(=O)/C=C/C1=CCC[C@H](C1(C)C)C |

| 3 | CC(=O)OCC(COC(=O)C)OC(=O)C |

| 4 | CCCCCCCC(=O)OC/C=C(/CCC=C(C)C)\C |

predictions, raw_outputs = model.predict(test_df["SMILES"].tolist())

from tqdm.notebook import tqdm

final_preds=[]
for i,row in tqdm(test_df.iterrows(),total=len(test_df)):
    #predictions, raw_outputs = model.predict([row["SMILES"]])
    # Take the 15 highest-scoring labels for this molecule
    order = np.argsort(raw_outputs[i])[::-1][:15]
    labelled_preds = [vocab[j] for j in order]
    for x in labelled_preds:
        assert x in vocab
    # Group them into 5 sentences of 3 smells each
    sents = []
    for sent in range(0,15,3):
        sents.append(",".join([x for x in labelled_preds[sent:sent+3]]))
    pred = ";".join([x for x in sents])
    final_preds.append(pred)

print(len(final_preds),len(test_df))

1079 1079
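The formatting step can be isolated into a small function: rank the labels by score, keep the top ones, and join them into sentences separated by `;`. A minimal sketch on a toy vocabulary (the function name `format_prediction` and the six-letter vocabulary are hypothetical):

```python
def format_prediction(scores, vocab, n_sentences=5, per_sentence=3):
    """Build 'a,b,c;d,e,f;...' from the highest-scoring labels."""
    k = n_sentences * per_sentence
    ranked = sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)[:k]
    labels = [vocab[j] for j in ranked]
    sentences = [
        ",".join(labels[start:start + per_sentence])
        for start in range(0, len(labels), per_sentence)
    ]
    return ";".join(sentences)


toy_vocab = list("abcdef")                   # hypothetical 6-label vocabulary
toy_scores = [0.1, 0.9, 0.3, 0.8, 0.2, 0.7]
print(format_prediction(toy_scores, toy_vocab, n_sentences=2))  # b,d,f;c,e,a
```

With the defaults and a 109-label score vector, this yields 5 sentences of 3 smells each.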

final = pd.DataFrame({"SMILES":test_df.SMILES.tolist(),"PREDICTIONS":final_preds})

final.head()

| | SMILES | PREDICTIONS |

|---|---|---|

| 0 | CCC(C)C(=O)OC1CC2CCC1(C)C2(C)C | camphor,resinous,fruity;woody,earthy,coniferou... |

| 1 | CC(C)C1CCC(C)CC1OC(=O)CC(C)O | fruity,sweet,mint;woody,herbal,green;spicy,van... |

| 2 | CC(=O)/C=C/C1=CCC[C@H](C1(C)C)C | woody,fruity,floral;herbal,resinous,fresh;bals... |

| 3 | CC(=O)OCC(COC(=O)C)OC(=O)C | fruity,apple,sweet;ethereal,fresh,burnt;herbal... |

| 4 | CCCCCCCC(=O)OC/C=C(/CCC=C(C)C)\C | fruity,oily,fresh;floral,herbal,fatty;citrus,g... |

final.tail()

| | SMILES | PREDICTIONS |

|---|---|---|

| 1074 | CC(=CCCC(C)CC=O)C | citrus,floral,fresh;aldehydic,lemon,green;herb... |

| 1075 | CC(=O)c1ccc(c(c1)OC)O | sweet,spicy,phenolic;floral,vanilla,woody;resi... |

| 1076 | C[C@@H]1CC[C@H]2[C@@H]1C1[C@H](C1(C)C)CC[C@]2(C)O | woody,camphor,earthy;herbal,resinous,musk;gree... |

| 1077 | C=C1C=CCC(C)(C)C21CCC(C)O2 | woody,floral,green;herbal,sweet,rose;balsamic,... |

| 1078 | CCC/C=C/C(OC)OC | fruity,green,herbal;apple,fresh,ethereal;sweet... |

final.to_csv("submission_chemberta.csv",index=False)

This gives a score of ~0.29 on the leaderboard.

Using Graph Networks

deepchem is an amazing library that provides a high quality open-source toolchain that democratizes the use of deep-learning in drug discovery, materials science, quantum chemistry, and biology.

We will be using deepchem to make our GraphConvModel. Molecules naturally lend themselves to being viewed as graphs. Graph Convolutions are one of the most powerful deep learning tools for working with molecular data.

Graph convolutions are similar to CNNs that are used to process images, but they operate on a graph. They begin with a data vector for each node of the graph (for example, the chemical properties of the atom that node represents). Convolutional and pooling layers combine information from connected nodes (for example, atoms that are bonded to each other) to produce a new data vector for each node.
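To illustrate the idea of combining information from bonded atoms, here is a deliberately simplified sketch of one neighbour-aggregation step on ethanol (`CCO`). It uses plain sums with no learned weights, so it is not what deepchem's GraphConvModel computes; the two-entry feature vectors and all function names are assumptions made for illustration.

```python
# Toy molecule: ethanol, SMILES "CCO" (hydrogens left implicit).
atom_features = [
    [1, 0],  # atom 0: carbon -> [is_carbon, is_oxygen]
    [1, 0],  # atom 1: carbon
    [0, 1],  # atom 2: oxygen
]
bonds = [(0, 1), (1, 2)]


def neighbours(n_atoms, bonds):
    """Adjacency list built from an undirected bond list."""
    adj = [[] for _ in range(n_atoms)]
    for a, b in bonds:
        adj[a].append(b)
        adj[b].append(a)
    return adj


def aggregate_step(features, bonds):
    """New vector per atom = its own vector + the vectors of bonded atoms."""
    adj = neighbours(len(features), bonds)
    updated = []
    for i, own in enumerate(features):
        new = list(own)
        for j in adj[i]:
            new = [x + y for x, y in zip(new, features[j])]
        updated.append(new)
    return updated


def sum_pool(features):
    """Collapse per-atom vectors into one molecule-level vector."""
    return [sum(column) for column in zip(*features)]


step1 = aggregate_step(atom_features, bonds)
print(step1)            # [[2, 0], [2, 1], [1, 1]]
print(sum_pool(step1))  # [5, 2]
```

After one step the middle carbon already "knows" it sits next to an oxygen; a real graph convolution adds learned weight matrices and nonlinearities around the same aggregation.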

!pip install --pre deepchem

Collecting deepchem

Downloading https://files.pythonhosted.org/packages/14/c2/76c72bd5cdde182a6516bb40aaa0eb6322e89afabc12d1f713b53d1f732d/deepchem-2.4.0rc1.dev20201021184017.tar.gz (397kB)

|████████████████████████████████| 399kB 11.9MB/s

Requirement already satisfied: joblib in /usr/local/lib/python3.6/dist-packages (from deepchem) (0.16.0)

Requirement already satisfied: numpy in /usr/local/lib/python3.6/dist-packages (from deepchem) (1.18.5)

Requirement already satisfied: pandas in /usr/local/lib/python3.6/dist-packages (from deepchem) (1.1.2)

Requirement already satisfied: scikit-learn in /usr/local/lib/python3.6/dist-packages (from deepchem) (0.22.2.post1)

Requirement already satisfied: scipy in /usr/local/lib/python3.6/dist-packages (from deepchem) (1.4.1)

Requirement already satisfied: python-dateutil>=2.7.3 in /usr/local/lib/python3.6/dist-packages (from pandas->deepchem) (2.8.1)

Requirement already satisfied: pytz>=2017.2 in /usr/local/lib/python3.6/dist-packages (from pandas->deepchem) (2018.9)

Requirement already satisfied: six>=1.5 in /usr/local/lib/python3.6/dist-packages (from python-dateutil>=2.7.3->pandas->deepchem) (1.15.0)

Building wheels for collected packages: deepchem

Building wheel for deepchem (setup.py) ... done

Created wheel for deepchem: filename=deepchem-2.4.0rc1.dev20201022163145-cp36-none-any.whl size=502778 sha256=15d5e46cda835e8b579932f827986f789ca962fbbcf9b853b043ca4961f84a1d

Stored in directory: /root/.cache/pip/wheels/4b/ef/ce/c166ee776d4fcc0cd5e586887dcab72f9fc990b5b5f29fccea

Successfully built deepchem

Installing collected packages: deepchem

Successfully installed deepchem-2.4.0rc1.dev20201022163145

The following code finds the most frequent smells in the dataset. We will use these smells to pad the results our model predicts, since the submission requires 5 sentences of 3 smells each.

from sklearn.preprocessing import MultiLabelBinarizer

def make_sentence_list(sent):
    return sent.split(",")

train_df = pd.read_csv("/content/data/train.csv")
train_df["SENTENCE_LIST"] = train_df.SENTENCE.apply(make_sentence_list)

multilabel_binarizer = MultiLabelBinarizer()
multilabel_binarizer.fit(train_df.SENTENCE_LIST)
Y = multilabel_binarizer.transform(train_df.SENTENCE_LIST)

# Count how often each smell occurs and sort by frequency
d = {}
for x,y in zip(multilabel_binarizer.classes_,Y.sum(axis=0)):
    d[x]=y
d = sorted(d.items(), key=lambda x: x[1], reverse=True)

# Despite the name, this keeps the 20 most frequent smells
top_15 = [x[0] for x in d[:20]]
top_15

['fruity', 'floral', 'woody', 'herbal', 'green', 'fresh', 'sweet', 'resinous', 'spicy', 'balsamic', 'rose', 'earthy', 'ethereal', 'citrus', 'oily', 'mint', 'tropicalfruit', 'fatty', 'nut', 'camphor']
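Padding means: keep whatever the model predicted, then fill the remaining slots with frequent smells that are not already present. A minimal sketch with the standard library (the helper names `most_common_smells` and `pad_predictions` and the toy sentences are hypothetical):

```python
from collections import Counter


def most_common_smells(sentences, k):
    """Return the k most frequent smells across comma-separated sentences."""
    counts = Counter(smell for s in sentences for smell in s.split(","))
    return [smell for smell, _ in counts.most_common(k)]


def pad_predictions(predicted, fallback, size=15):
    """Append fallback smells (skipping duplicates) until size is reached."""
    padded = list(predicted)
    for smell in fallback:
        if len(padded) >= size:
            break
        if smell not in padded:
            padded.append(smell)
    return padded


toy_sentences = ["fruity,rose", "fresh,fruity", "fruity,rose"]
frequent = most_common_smells(toy_sentences, 2)
print(frequent)                                     # ['fruity', 'rose']
print(pad_predictions(["mint"], frequent, size=3))  # ['mint', 'fruity', 'rose']
```

Keeping the model's own predictions first matters when the order of the smells is used to form the sentences.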

The GraphConvModel accepts the output of the ConvMolFeaturizer, so we preprocess our SMILES with this featurizer. The complete model cheatsheet is available in the deepchem documentation.

import deepchem as dc

mols = [Chem.MolFromSmiles(smile) for smile in data_df["text"].tolist()]

feat = dc.feat.ConvMolFeaturizer()

arr = feat.featurize(mols)

print(arr.shape)

wandb: WARNING W&B installed but not logged in. Run `wandb login` or set the WANDB_API_KEY env variable. wandb: WARNING W&B installed but not logged in. Run `wandb login` or set the WANDB_API_KEY env variable.

(4316,)

labels = []
train_df = pd.read_csv("data/train.csv")
for x in train_df.SENTENCE.tolist():
    labels.append(np.array(get_ohe_label(x)))

labels = np.array(labels)
print(labels.shape)

(4316, 109)

Create a Train and Validation set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(arr,labels, test_size=.1, random_state=42)

train_dataset = dc.data.NumpyDataset(X=X_train, y=y_train)

val_dataset = dc.data.NumpyDataset(X=X_test, y=y_test)

print(train_dataset)

<NumpyDataset X.shape: (3884,), y.shape: (3884, 109), w.shape: (3884, 1), task_names: [ 0 1 2 ... 106 107 108]>

print(val_dataset)

<NumpyDataset X.shape: (432,), y.shape: (432, 109), w.shape: (432, 1), ids: [0 1 2 ... 429 430 431], task_names: [ 0 1 2 ... 106 107 108]>

model = dc.models.GraphConvModel(n_tasks=109, mode='classification',dropout=0.2)

model.fit(train_dataset, nb_epoch=110)

/usr/local/lib/python3.6/dist-packages/tensorflow/python/framework/indexed_slices.py:432: UserWarning: Converting sparse IndexedSlices to a dense Tensor of unknown shape. This may consume a large amount of memory. "Converting sparse IndexedSlices to a dense Tensor of unknown shape. "

0.07197612126668294

The metric we will use to evaluate our model is the Jaccard score, similar to the one used in the competition.

metric = dc.metrics.Metric(dc.metrics.jaccard_score)

print('training set score:', model.evaluate(train_dataset, [metric]))

training set score: {'jaccard_score': 0.3169358218239942}
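For intuition, the Jaccard score of one prediction is the size of the overlap between the predicted and true smell sets divided by the size of their union. A minimal sketch on label sets (the function name `jaccard` is hypothetical, returning 0.0 for two empty sets is an assumption, and the way the competition aggregates over sentences is not reproduced here):

```python
def jaccard(predicted, truth):
    """Intersection over union of two collections of labels."""
    predicted, truth = set(predicted), set(truth)
    union = predicted | truth
    if not union:
        return 0.0  # assumption: two empty sets score 0
    return len(predicted & truth) / len(union)


print(jaccard(["camphor", "mint"], ["mint"]))          # 0.5
print(jaccard(["spicy", "woody"], ["dry", "herbal"]))  # 0.0
```

Every extra wrong smell enlarges the union, so over-predicting is penalised as well as missing a smell.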

The following is the ROC AUC score for each of the 109 classes.

y_true = val_dataset.y
y_pred = model.predict(val_dataset)
metric = dc.metrics.roc_auc_score

for i in range(109):
    try:
        # Sanity check: the two class probabilities should sum to 1
        for gt,prediction in zip(y_true[:,i],y_pred[:,i]):
            assert round(prediction[0]+prediction[1])==1,prediction[0]-prediction[1]
        score = metric(dc.metrics.to_one_hot(y_true[:,i]), y_pred[:,i])
        print(vocab[i], score)
    except Exception:
        # The score could not be computed for this class
        print("err")

alcoholic 0.9597902097902098 aldehydic 0.7968215994531784 alliaceous 0.9701421800947867 almond 0.980327868852459 ambergris 0.9151162790697674 ambery 0.8611374407582939 ambrette 0.9604651162790698 ammoniac 0.9930394431554523 animalic 0.7099206349206348 anisic 0.6938616938616939 apple 0.8399680255795363 balsamic 0.7959270984854967 banana 0.984375 berry 0.7504396482813749 blackcurrant 0.8998247663551402 err body 0.8761682242990655 bread 0.9410046728971964 burnt 0.8518450523223793 butter 0.7444964871194379 cacao 0.9045667447306791 camphor 0.9093196314670446 caramellic 0.9230769230769231 cedar 0.732903981264637 cheese 0.8755668322176635 chemical 0.7866715623278868 cherry 0.9867909867909869 cinnamon 0.9945609945609946 citrus 0.8318850654617078 clean 0.7103174603174602 clove 0.6744366744366744 coconut 0.9970794392523364 coffee 0.9812646370023419 err coniferous 0.9625292740046838 cooked 0.8358287365379564 cooling 0.6711492564714522 cucumber 0.994199535962877 dairy 0.812675448067372 dry 0.7238095238095238 earthy 0.8255126868265554 ester 0.7302325581395349 ethereal 0.8611782071926999 fatty 0.8433014354066986 err fermented 0.602436974789916 floral 0.7697652483756026 fresh 0.7470848300635535 fruity 0.7830530354645534 geranium 0.6523255813953488 gourmand 0.954225352112676 grape 0.8097447795823666 grapefruit 0.9848837209302326 grass 0.7258411580594679 green 0.6896101240514383 herbal 0.6987903225806451 honey 0.822429906542056 hyacinth 0.9622950819672131 jasmin 0.9174491392801252 lactonic 0.6418026418026417 leaf 0.7687796208530806 leather 0.9984459984459985 lemon 0.77380695314187 lily 0.9566627358490567 liquor 0.6599531615925058 meat 0.9577294685990339 medicinal 0.9913928012519562 melon 0.8732311320754718 metallic 0.49393583724569634 mint 0.7727088402270883 mushroom 0.855154028436019 musk 0.88490606780393 musty 0.6343577620173365 nut 0.8486328125 odorless 0.8408018867924529 oily 0.8976111778846154 orange 0.8517214397496088 err pear 0.9222748815165877 pepper 0.780885780885781 
phenolic 0.9590361445783133 plastic 0.7710280373831775 plum 0.8174418604651162 powdery 0.8420168067226891 pungent 0.666218487394958 rancid 0.6252100840336134 resinous 0.7452023674299038 ripe 0.888631090487239 roasted 0.8001984126984126 rose 0.845460827043691 seafood 0.8552325581395348 sharp 0.6262626262626263 smoky 0.9907192575406032 sour 0.8715966386554622 spicy 0.70349609375 sulfuric 0.9550457652647433 sweet 0.610172321667112 syrup 0.9761124121779859 terpenic 0.839907192575406 tobacco 0.7579225352112676 tropicalfruit 0.8007441530829199 vanilla 0.9428794992175273 vegetable 0.7854594112399643 violetflower 0.6685011709601874 watery 0.919392523364486 waxy 0.8652912621359223 whiteflower 0.8607888631090487 wine 0.6337236533957845 woody 0.7921620091606196
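ROC AUC is the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one, which also means it is undefined when a class has no positives (or no negatives) in the validation split. That is one plausible cause of the `err` entries, though not confirmed here. A minimal pure-Python sketch of the pairwise definition (the function name `roc_auc` is hypothetical):

```python
def roc_auc(y_true, y_score):
    """Fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for t, s in zip(y_true, y_score) if t == 1]
    neg = [s for t, s in zip(y_true, y_score) if t == 0]
    if not pos or not neg:
        raise ValueError("ROC AUC is undefined when only one class is present")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75

try:
    roc_auc([0, 0, 0], [0.2, 0.1, 0.3])  # a class with no positive example
except ValueError as exc:
    print("err:", exc)
```

The pairwise loop is quadratic, which is fine for a few hundred validation rows per class.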

Let's compare the model's predictions with the ground truth.

Note: y_pred is of the shape (n_samples,n_tasks,n_classes) with y_pred[:,:,1] corresponding to the probabilities for class 1.
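A minimal sketch of how one sample's slice of that array turns into smell names: keep the tasks whose class-1 probability clears a threshold and order them by that probability. The function name `smells_above_threshold` and the toy data are hypothetical, and this sketch sorts from most to least probable, which is a choice made here for illustration.

```python
def smells_above_threshold(sample_probs, vocab, threshold=0.37):
    """sample_probs[j] is [p(class 0), p(class 1)] for task j."""
    picked = [
        (probs[1], vocab[j])
        for j, probs in enumerate(sample_probs)
        if probs[1] > threshold
    ]
    picked.sort(reverse=True)  # most probable smell first
    return [smell for _, smell in picked]


toy_vocab = ["fruity", "mint", "woody"]          # hypothetical vocabulary
toy_probs = [[0.2, 0.8], [0.7, 0.3], [0.5, 0.5]]
print(smells_above_threshold(toy_probs, toy_vocab))  # ['fruity', 'woody']
```

Lowering the threshold returns more smells per molecule, which trades precision for recall.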

y_true = val_dataset.y
y_pred = model.predict(val_dataset)
# print(y_true.shape,y_pred.shape)

for i in range(y_true.shape[0]):
    final_pred = []
    prob_val = []
    for y in range(109):
        prediction = y_pred[i,y]
        # Keep a smell when its class-1 probability clears the threshold
        if prediction[1] > 0.37:
            final_pred.append(1)
            prob_val.append(prediction[1])
        else:
            final_pred.append(0)
    smell_ids = np.where(np.array(final_pred)==1)
    smells = [vocab[k] for k in smell_ids[0]]
    smells = [smells[k] for k in np.argsort(np.array(prob_val))] #to further order based on probability
    gt_smell_ids = np.where(np.array(y_true[i])==1)
    gt_smells = [vocab[k] for k in gt_smell_ids[0]]
    print(smells,gt_smells)

['sweet', 'resinous', 'cinnamon', 'berry', 'fruity', 'balsamic'] ['balsamic', 'cinnamon', 'fruity', 'powdery', 'sweet'] ['spicy', 'woody'] ['dry', 'herbal'] ['sweet', 'resinous', 'fruity', 'phenolic'] ['floral', 'fruity', 'resinous', 'sweet'] ['herbal', 'ethereal', 'citrus', 'fresh', 'floral'] ['citrus', 'fresh', 'lily'] ['green', 'waxy', 'tropicalfruit', 'butter', 'banana', 'fruity', 'cognac'] ['green', 'pear'] ['fresh', 'floral'] ['geranium', 'leaf', 'spicy'] ['resinous', 'fruity', 'geranium', 'rose', 'honey'] ['berry', 'geranium', 'honey', 'powdery', 'waxy'] ['fermented', 'floral', 'balsamic', 'resinous', 'honey', 'rose'] ['floral', 'herbal', 'lemon', 'rose', 'spicy'] ['waxy', 'seafood', 'musty', 'ammoniac', 'chemical', 'cognac', 'aldehydic', 'ethereal', 'pungent', 'oily', 'terpenic'] ['chemical'] ['fresh', 'fermented', 'ester', 'ethereal', 'cognac', 'apple', 'banana', 'fruity'] ['apple', 'fermented'] ['meat', 'dairy', 'cooked', 'vegetable', 'alliaceous', 'sulfuric'] ['alliaceous', 'fresh', 'gourmand', 'metallic', 'spicy', 'sulfuric', 'vegetable'] ['fresh', 'floral', 'fruity', 'resinous'] ['green', 'herbal', 'jasmin'] ['lily', 'lemon', 'terpenic', 'floral', 'resinous'] ['coniferous', 'floral', 'resinous'] ['ethereal', 'floral', 'rose', 'fresh', 'herbal', 'berry', 'fruity', 'apple'] ['fruity', 'herbal'] ['camphor', 'mint'] ['mint'] ['resinous', 'chemical', 'medicinal', 'spicy', 'leather', 'phenolic'] ['medicinal', 'phenolic', 'resinous', 'spicy'] ['cacao', 'lemon', 'cognac', 'butter', 'cheese', 'fresh', 'fruity', 'mushroom', 'ethereal', 'fermented', 'alcoholic'] ['burnt', 'ethereal', 'fermented', 'fresh'] ['mint', 'floral', 'fresh'] ['melon', 'tobacco'] ['terpenic', 'woody'] ['chemical', 'resinous', 'rose'] ['grapefruit', 'fresh', 'terpenic', 'vanilla', 'woody', 'dairy', 'musk', 'gourmand', 'ambery', 'camphor'] ['woody'] ['gourmand', 'burnt', 'caramellic', 'phenolic', 'sour'] ['syrup'] ['fermented', 'floral', 'lily', 'herbal', 'ethereal', 'fresh'] ['clean', 
'floral', 'fresh', 'grass', 'lily', 'resinous', 'spicy', 'sweet'] ['waxy', 'fresh', 'oily', 'rose', 'ethereal'] ['citrus', 'fresh', 'rose', 'waxy'] ['woody', 'ethereal', 'earthy', 'cedar', 'camphor'] ['camphor', 'clean', 'cooling', 'green', 'resinous'] ['oily', 'lily', 'grapefruit', 'leaf', 'fresh', 'citrus', 'aldehydic', 'lemon', 'herbal'] ['fruity', 'grass', 'green', 'herbal'] ['herbal', 'floral', 'fruity'] ['animalic', 'green', 'pear', 'tropicalfruit'] ['dairy', 'vanilla'] ['cedar', 'fresh', 'leaf', 'mint'] ['fresh', 'oily', 'fruity', 'pear'] ['floral', 'fresh', 'fruity', 'herbal'] ['caramellic', 'gourmand', 'meat', 'sulfuric', 'bread'] ['bread', 'fermented', 'gourmand', 'meat', 'nut', 'sulfuric'] ['blueberry', 'sweet', 'fresh', 'liquor', 'plum', 'apple', 'fruity', 'ethereal'] ['chemical', 'ethereal', 'fruity', 'sweet'] ['butter', 'banana', 'wine', 'dairy', 'fermented', 'sour', 'burnt', 'cheese', 'caramellic', 'fruity'] ['burnt', 'ester', 'fruity', 'pungent'] ['nut', 'earthy', 'gourmand', 'vegetable'] ['meat', 'roasted', 'vegetable'] ['fresh', 'citrus', 'aldehydic', 'floral'] ['orange'] ['mint', 'leather', 'burnt', 'cedar', 'body', 'cheese', 'sour'] ['balsamic', 'pungent', 'sour'] ['waxy', 'woody', 'floral'] ['balsamic', 'rose'] ['fruity', 'whiteflower', 'balsamic', 'medicinal'] ['cooling', 'medicinal', 'sweet'] ['leather', 'nut', 'earthy', 'coffee'] ['coffee', 'earthy', 'nut'] ['caramellic', 'nut', 'fruity'] ['fruity', 'tobacco'] ['mint', 'fresh', 'citrus'] ['mint'] ['ethereal', 'woody', 'ambery'] ['ambery', 'cedar', 'dry', 'ethereal', 'herbal'] ['camphor', 'woody', 'musk', 'earthy'] ['ambery', 'rancid', 'woody'] ['dairy', 'butter', 'cheese', 'sour'] ['odorless'] ['herbal', 'floral', 'lily', 'aldehydic', 'resinous', 'fresh'] ['fresh'] ['resinous', 'mint', 'fresh', 'spicy', 'camphor'] ['camphor', 'cedar'] ['gourmand', 'burnt', 'sulfuric', 'roasted', 'chemical', 'smoky', 'seafood', 'meat', 'alliaceous'] ['alliaceous', 'fruity', 'green'] ['herbal', 'caramellic', 
'fruity', 'ethereal', 'fresh'] ['ethereal', 'fresh', 'fruity'] ['ethereal', 'tobacco', 'herbal', 'fruity'] ['floral', 'fruity'] ['fruity', 'herbal', 'pungent', 'fresh', 'fatty', 'aldehydic'] ['citrus', 'dairy', 'floral', 'fruity'] ['chemical', 'herbal', 'resinous', 'mint', 'pungent', 'terpenic'] ['roasted'] ['ethereal', 'fresh', 'ester', 'pear', 'fruity', 'apple', 'banana'] ['fruity'] ['chemical', 'banana', 'caramellic', 'burnt', 'sharp', 'butter', 'fruity'] ['burnt', 'caramellic', 'fruity', 'resinous'] ['banana', 'fresh', 'ester', 'fruity'] ['fatty', 'fruity'] ['alliaceous', 'metallic', 'vegetable', 'cheese', 'sulfuric', 'tropicalfruit'] ['alliaceous', 'tropicalfruit'] ['fruity', 'coconut', 'jasmin'] ['fruity', 'jasmin'] ['floral', 'waxy', 'aldehydic', 'green', 'leaf', 'fresh', 'rose', 'hyacinth'] ['green', 'hyacinth'] ['oily', 'lily', 'grapefruit', 'leaf', 'fresh', 'citrus', 'aldehydic', 'lemon', 'herbal'] ['grass', 'green', 'tobacco'] ['cheese', 'sour'] ['herbal', 'resinous'] ['ethereal', 'wine', 'liquor', 'caramellic', 'fruity', 'apple'] ['animalic', 'caramellic', 'fruity', 'herbal'] ['nut', 'dairy', 'spicy', 'fruity', 'mint', 'coconut'] ['chemical', 'fruity', 'herbal', 'pungent'] ['fruity', 'fresh', 'mint'] ['mint'] ['ethereal', 'tobacco', 'herbal', 'fruity'] ['herbal', 'tropicalfruit'] ['ethereal', 'herbal', 'fruity', 'fresh', 'mushroom'] ['green', 'liquor', 'mushroom', 'sweet'] ['vanilla', 'resinous', 'grass', 'medicinal', 'coconut', 'phenolic'] ['floral', 'fruity', 'resinous', 'sweet'] ['fresh', 'musk', 'mint'] ['chemical', 'mint', 'musk'] ['alcoholic', 'fresh', 'ethereal', 'fruity'] ['apple', 'fermented'] ['earthy', 'fruity'] ['herbal', 'spicy'] ['alliaceous', 'sulfuric'] ['burnt', 'resinous', 'sulfuric'] ['fresh', 'coffee', 'burnt'] ['coffee', 'woody'] ['fresh', 'melon', 'rancid', 'waxy', 'citrus', 'aldehydic', 'cucumber'] ['aldehydic', 'citrus'] ['phenolic', 'sour', 'balsamic', 'spicy', 'vanilla'] ['odorless'] ['pungent', 'meat', 'sulfuric', 'camphor', 
'animalic', 'almond', 'vegetable', 'earthy', 'roasted', 'alcoholic', 'rancid', 'seafood', 'chemical'] ['ammoniac'] ['fresh', 'balsamic', 'resinous', 'cinnamon', 'honey', 'fermented', 'alcoholic', 'bread', 'rose'] ['balsamic', 'hyacinth'] ['herbal', 'lily', 'floral', 'alcoholic', 'fresh'] ['aldehydic', 'floral', 'grass', 'leaf'] ['tobacco', 'burnt', 'animalic', 'sulfuric', 'blackcurrant'] ['fermented', 'green', 'metallic', 'sulfuric', 'tropicalfruit', 'woody'] ['fatty', 'fruity', 'waxy', 'pear'] ['fruity', 'green', 'oily'] ['body', 'cheese', 'sour'] ['cheese', 'fruity'] ['sweet', 'dairy', 'cognac', 'grape', 'sour', 'fruity', 'fermented', 'ethereal', 'alcoholic'] ['alcoholic', 'chemical', 'ethereal'] ['caramellic'] ['odorless'] ['cheese', 'alcoholic', 'fermented', 'fresh', 'burnt', 'chemical', 'ethereal', 'fruity', 'banana'] ['chemical', 'dairy', 'fruity', 'green', 'herbal', 'sharp'] ['fruity', 'mushroom'] ['melon', 'mushroom', 'violetflower'] ['blueberry', 'fruity', 'citrus', 'rose'] ['citrus', 'fatty', 'floral'] ['fresh', 'herbal'] ['fresh', 'mint'] ['smoky', 'sulfuric', 'seafood', 'roasted', 'meat', 'gourmand', 'alliaceous'] ['meat', 'plastic'] ['burnt', 'tobacco', 'resinous', 'earthy', 'animalic', 'leather'] ['animalic', 'earthy', 'leather', 'tobacco', 'woody'] ['berry', 'violetflower', 'cucumber', 'grass', 'chemical', 'fruity'] ['nut'] ['fresh', 'fruity', 'blackcurrant'] ['balsamic', 'herbal', 'rancid'] ['citrus', 'sulfuric'] ['animalic', 'berry', 'green', 'mint', 'sulfuric'] ['earthy', 'tropicalfruit', 'smoky', 'vegetable', 'seafood', 'gourmand', 'nut', 'roasted', 'alliaceous', 'meat'] ['alliaceous', 'cooked', 'fatty', 'roasted', 'vegetable'] ['lily', 'lemon', 'terpenic', 'floral', 'resinous'] ['coniferous', 'floral', 'resinous'] ['gourmand', 'caramellic', 'nut', 'meat', 'bread'] ['ethereal', 'sweet'] ['banana', 'fresh', 'ethereal', 'fruity'] ['fruity', 'vegetable'] ['woody'] ['citrus', 'resinous', 'spicy'] ['herbal', 'floral', 'fruity', 'resinous'] ['floral', 
'green', 'herbal', 'plum'] ['lily', 'honey', 'resinous', 'floral', 'rose', 'balsamic'] ['herbal', 'rose'] ['spicy', 'vanilla', 'leather', 'medicinal', 'smoky', 'phenolic'] ['medicinal', 'phenolic', 'spicy'] ['anisic', 'phenolic', 'cinnamon', 'sweet', 'cherry', 'vanilla', 'almond'] ['almond', 'balsamic', 'cherry', 'floral', 'sweet'] ['cooling', 'mint'] ['cooling', 'sweet'] ['green', 'terpenic', 'camphor', 'citrus'] ['citrus', 'earthy', 'green', 'mint', 'spicy', 'sweet', 'woody'] ['citrus', 'orange', 'violetflower', 'fruity', 'resinous', 'floral', 'lily'] ['floral', 'lemon', 'orange'] ['lactonic', 'fruity'] ['floral', 'fruity', 'woody'] ['herbal', 'lemon', 'citrus'] ['aldehydic', 'dry', 'green', 'lemon', 'orange'] ['cooked', 'meat', 'gourmand'] ['cheese', 'gourmand', 'meat'] ['honey', 'green', 'fruity', 'sweet', 'herbal', 'rose', 'cherry', 'almond', 'hyacinth'] ['chemical', 'floral', 'pungent', 'resinous'] ['medicinal', 'vanilla', 'phenolic'] ['floral', 'herbal', 'sweet'] ['camphor', 'woody', 'earthy'] ['ambery', 'tobacco', 'woody'] ['cedar', 'floral', 'ambergris', 'violetflower', 'woody'] ['balsamic', 'woody'] ['berry', 'violetflower', 'cucumber', 'grass', 'chemical', 'fruity'] ['dairy', 'mushroom'] ['body', 'fruity', 'pungent', 'sour', 'cheese'] ['meat', 'oily', 'roasted', 'sour'] ['sweet', 'cherry', 'almond'] ['almond', 'cherry', 'spicy', 'sweet'] ['sweet', 'phenolic', 'blackcurrant', 'cinnamon'] ['phenolic', 'spicy'] ['fermented', 'cheese', 'cognac', 'musty', 'apple', 'banana', 'butter', 'fruity'] ['banana', 'meat', 'ripe'] ['burnt', 'bread', 'fruity', 'berry', 'syrup', 'butter', 'caramellic'] ['berry', 'caramellic', 'clean'] ['fresh', 'ethereal', 'body', 'burnt', 'fruity'] ['pungent', 'vegetable'] ['sulfuric', 'spicy'] ['herbal', 'spicy', 'sulfuric', 'vegetable'] ['fresh', 'floral', 'fruity', 'resinous'] ['floral', 'fresh', 'fruity', 'resinous'] ['metallic', 'tropicalfruit', 'cacao', 'alliaceous', 'resinous', 'syrup', 'green', 'leaf', 'roasted', 'meat', 
'vegetable'] ['cooked', 'meat', 'nut'] ['fruity'] ['fatty', 'floral', 'sweet'] ['odorless', 'waxy', 'cognac', 'fruity', 'oily'] ['oily'] ['oily', 'fruity'] ['liquor', 'oily', 'wine'] ['honey', 'apple', 'mint', 'fruity'] ['fresh', 'herbal', 'woody'] ['fruity', 'woody', 'mint'] ['camphor', 'musty', 'woody'] ['sharp', 'body', 'pungent', 'lemon', 'pepper', 'fresh', 'chemical', 'ethereal', 'terpenic'] ['chemical', 'ethereal'] ['sweet', 'phenolic', 'resinous', 'balsamic'] ['balsamic', 'floral', 'herbal'] ['lemon', 'fresh', 'herbal'] ['aldehydic', 'floral', 'herbal'] ['pear', 'pungent', 'green', 'tropicalfruit', 'butter', 'banana', 'fruity', 'apple', 'cognac'] ['apple', 'banana', 'fatty'] ['sweet', 'pungent', 'pear', 'blueberry', 'ethereal', 'ester', 'fruity', 'wine', 'fermented', 'banana', 'apple'] ['apple', 'banana'] ['waxy', 'cognac', 'fruity', 'rose'] ['citrus', 'fatty', 'floral', 'fresh'] ['phenolic', 'medicinal'] ['phenolic', 'plastic'] ['alliaceous', 'spicy', 'vegetable'] ['spicy', 'sulfuric'] ['leaf', 'waxy', 'oily', 'vegetable', 'melon', 'cucumber'] ['cucumber', 'green', 'melon', 'mushroom', 'oily', 'seafood'] ['mint', 'phenolic'] ['burnt', 'herbal', 'phenolic', 'sweet'] ['butter', 'fruity', 'wine', 'apple'] ['apple'] ['grass', 'woody', 'camphor', 'liquor', 'ethereal', 'fresh'] ['camphor', 'woody'] ['woody', 'fruity', 'tobacco', 'musk', 'berry'] ['syrup', 'woody'] ['green', 'banana', 'apple', 'pear'] ['green', 'pear', 'resinous', 'tropicalfruit'] ['resinous', 'animalic', 'musk', 'earthy'] ['balsamic', 'phenolic'] ['camphor'] ['alliaceous', 'roasted'] ['mint', 'resinous', 'cedar', 'camphor'] ['camphor', 'fresh'] ['fruity', 'waxy', 'oily', 'pear'] ['fruity', 'oily'] ['herbal', 'fruity', 'green', 'fresh'] ['ethereal', 'fatty', 'liquor'] ['musk', 'mint'] ['herbal'] ['fruity', 'resinous', 'cinnamon', 'leather', 'cacao', 'almond', 'bread', 'caramellic', 'coffee', 'burnt'] ['bread', 'caramellic', 'phenolic', 'woody'] ['mushroom', 'waxy', 'fatty', 'fruity', 'pear'] 
['green', 'melon', 'pear', 'tropicalfruit', 'waxy'] ['resinous', 'apple', 'ester', 'butter', 'blackcurrant', 'sour', 'sweet', 'berry', 'cherry', 'fruity', 'cinnamon', 'fermented', 'balsamic', 'blueberry'] ['balsamic', 'fruity'] ['dairy', 'fruity', 'oily', 'musk', 'woody', 'animalic'] ['ambergris', 'animalic', 'anisic', 'clean', 'fatty', 'musk', 'powdery'] ['fresh', 'fruity'] ['floral', 'green', 'herbal'] ['fruity', 'mint'] ['cooling', 'tropicalfruit', 'woody'] ['fruity', 'coniferous', 'resinous', 'camphor'] ['camphor', 'nut', 'resinous'] ['spicy', 'phenolic'] ['floral', 'mint', 'sweet', 'violetflower', 'woody'] ['oily', 'fruity', 'dairy', 'melon', 'aldehydic', 'cucumber'] ['fruity'] ['resinous'] ['rose'] ['cherry', 'sweet', 'anisic'] ['anisic'] ['sweet', 'camphor', 'mint'] ['mint'] ['roasted', 'berry', 'cheese', 'sour', 'grass', 'mint', 'coconut', 'syrup', 'phenolic', 'caramellic', 'bread', 'burnt'] ['body', 'gourmand', 'nut', 'spicy', 'syrup'] ['orange', 'resinous'] ['aldehydic', 'citrus', 'green'] ['rose', 'tobacco', 'fruity', 'honey'] ['honey', 'rose'] ['spicy', 'cinnamon', 'sweet', 'balsamic'] ['balsamic', 'spicy'] ['medicinal', 'balsamic', 'earthy', 'resinous', 'camphor'] ['camphor', 'coniferous', 'cooling'] ['ester', 'fermented', 'cognac', 'butter', 'cheese', 'apple', 'fruity', 'banana'] ['butter', 'fruity'] ['fruity', 'hyacinth', 'rose'] ['balsamic', 'floral'] ['citrus', 'woody', 'spicy', 'fresh', 'camphor'] ['fresh', 'mint'] ['fermented', 'sweet', 'banana', 'apple', 'ethereal', 'fruity'] ['banana', 'cheese', 'tropicalfruit'] ['woody', 'herbal', 'spicy', 'fresh', 'resinous'] ['aldehydic', 'fresh', 'herbal'] ['medicinal', 'coffee', 'meat', 'burnt', 'seafood', 'sulfuric', 'alliaceous'] ['burnt', 'caramellic', 'coffee'] ['resinous', 'sweet'] ['balsamic', 'musk'] ['woody'] ['clean', 'fresh', 'herbal'] ['fruity', 'fresh', 'mint'] ['camphor'] ['lily', 'fresh', 'ester', 'woody', 'citrus', 'ethereal', 'fruity', 'lemon', 'floral'] ['ethereal', 'floral', 'lemon'] 
['waxy', 'rose', 'pear', 'fruity', 'ester', 'banana'] ['banana', 'body', 'fermented', 'green', 'meat', 'melon'] ['herbal', 'burnt', 'ethereal', 'fresh', 'fruity'] ['berry', 'cheese', 'green', 'herbal'] ['herbal', 'cooling', 'mint', 'fruity'] ['cooling', 'fruity'] ['musty', 'bread', 'meat', 'seafood', 'roasted', 'smoky', 'phenolic', 'vegetable', 'earthy', 'caramellic', 'burnt', 'cacao', 'coffee', 'body', 'nut'] ['cacao', 'caramellic', 'dry', 'musty', 'nut', 'vanilla'] ['herbal', 'balsamic', 'resinous', 'fruity', 'woody'] ['cedar', 'fresh', 'sharp'] ['aldehydic', 'metallic', 'ethereal', 'citrus', 'lemon'] ['herbal', 'lemon'] ['terpenic', 'fresh', 'camphor'] ['camphor', 'resinous'] ['herbal', 'woody', 'blackcurrant', 'camphor', 'leaf', 'tobacco', 'cooling'] ['pepper', 'woody'] ['resinous', 'balsamic', 'honey', 'rose', 'fruity'] ['herbal', 'sweet', 'wine'] ['tropicalfruit', 'fruity'] ['burnt', 'fruity', 'herbal'] ['berry', 'cedar', 'woody', 'ambergris'] ['ambery', 'woody'] ['fruity', 'apple', 'wine'] ['tropicalfruit', 'wine'] ['tropicalfruit', 'fruity'] ['apple', 'berry'] ['cinnamon', 'chemical', 'phenolic', 'bread', 'blackcurrant', 'camphor', 'anisic'] ['floral', 'orange', 'resinous', 'sweet'] ['sweet', 'nut', 'tobacco', 'caramellic', 'ethereal', 'fruity'] ['burnt', 'caramellic', 'fresh', 'fruity'] ['tropicalfruit', 'meat', 'alliaceous', 'berry', 'blackcurrant', 'sulfuric'] ['floral', 'grapefruit', 'lemon'] ['fresh', 'alcoholic', 'ethereal', 'fermented'] ['citrus', 'floral', 'fresh', 'oily', 'sweet'] ['sulfuric', 'tropicalfruit', 'alliaceous', 'vegetable'] ['cheese', 'sulfuric', 'tropicalfruit', 'vegetable'] ['clove', 'medicinal', 'vanilla', 'leather', 'phenolic'] ['phenolic', 'spicy'] ['gourmand', 'burnt', 'fruity', 'roasted', 'meat', 'cooked'] ['caramellic', 'dairy'] ['green', 'woody'] ['anisic', 'floral', 'fruity', 'woody'] [] ['herbal', 'spicy'] ['ambergris', 'ethereal', 'floral', 'woody', 'violetflower'] ['floral', 'fruity', 'woody'] ['floral', 'geranium', 
'fresh', 'citrus', 'fruity', 'rose'] ['floral', 'fresh', 'fruity'] [] ['aldehydic', 'fresh', 'herbal', 'woody'] ['fruity', 'coconut'] ['coconut', 'dairy', 'fruity'] ['earthy', 'camphor', 'anisic', 'green', 'spicy', 'woody'] ['clove', 'herbal', 'rose', 'woody'] ['animalic', 'gourmand', 'bread', 'earthy', 'terpenic'] ['balsamic', 'earthy', 'green', 'musk'] ['fermented', 'alcoholic', 'oily', 'ethereal', 'cheese', 'fruity', 'mushroom'] ['fresh', 'oily'] ['resinous', 'floral', 'aldehydic', 'lily', 'fresh'] ['clean', 'fresh'] ['honey', 'balsamic', 'fruity', 'rose'] ['dry', 'herbal', 'rose'] ['ethereal', 'fruity', 'apple'] ['fruity', 'green', 'musty', 'pungent'] ['fresh', 'herbal', 'woody', 'terpenic'] ['fresh', 'herbal', 'waxy'] ['woody', 'ambery'] ['ambery', 'herbal', 'woody'] ['waxy', 'cognac', 'fruity', 'rose'] ['rose', 'tropicalfruit'] ['grapefruit', 'cooling', 'mint'] ['dry', 'herbal'] ['anisic', 'chemical', 'apple', 'alliaceous', 'liquor', 'ethereal', 'fruity'] ['chemical', 'ethereal', 'fresh', 'fruity', 'plastic'] ['floral', 'lemon'] ['floral', 'green', 'lemon'] ['waxy', 'oily', 'pear', 'rose', 'fruity'] ['citrus', 'fatty', 'waxy'] ['fatty', 'geranium', 'fermented', 'ethereal', 'fresh', 'citrus', 'rose'] ['fatty', 'floral', 'mint'] ['vanilla', 'syrup', 'coconut', 'mint'] ['coconut', 'cooling', 'fruity'] ['overripe', 'dairy', 'ethereal', 'fermented', 'fruity', 'cheese', 'alcoholic', 'mushroom'] ['mushroom', 'musty', 'oily'] ['cognac', 'body', 'fresh', 'ripe', 'fruity', 'pear', 'apple', 'ethereal', 'banana'] ['apple', 'balsamic', 'banana', 'pear', 'woody'] ['nut', 'earthy'] ['herbal', 'nut'] ['coffee', 'cooked', 'vegetable', 'sulfuric', 'gourmand', 'meat', 'alliaceous'] ['cooked', 'dairy', 'roasted'] ['butter', 'gourmand', 'bread', 'caramellic', 'syrup'] ['clean', 'syrup'] ['cedar', 'fruity', 'berry', 'woody', 'violetflower'] ['berry', 'green', 'sweet', 'woody'] ['tropicalfruit', 'cheese', 'dairy', 'fruity', 'alliaceous', 'vegetable', 'meat', 'sulfuric'] 
['animalic', 'grape', 'rancid'] ['phenolic', 'coffee', 'animalic', 'leather', 'earthy', 'burnt'] ['floral', 'resinous'] ['jasmin', 'phenolic', 'fruity', 'fatty', 'balsamic', 'animalic'] ['balsamic', 'nut', 'vanilla'] ['camphor', 'cooling', 'terpenic', 'sulfuric'] ['citrus', 'resinous', 'sulfuric'] ['cooked', 'alliaceous'] ['alliaceous', 'earthy', 'spicy', 'sulfuric', 'vegetable'] ['sweet', 'smoky', 'resinous', 'green', 'cherry', 'fruity', 'balsamic'] ['balsamic', 'berry', 'cacao', 'green', 'herbal', 'whiteflower', 'woody'] ['butter', 'caramellic', 'sour'] ['sour', 'spicy', 'vegetable'] ['tropicalfruit', 'green', 'pear', 'fruity', 'apple', 'banana'] ['dairy', 'fresh', 'fruity', 'green'] ['melon', 'fruity', 'earthy', 'fresh', 'ethereal', 'grass', 'alcoholic', 'leaf', 'vegetable'] ['fresh', 'leaf'] ['floral', 'cinnamon', 'fruity', 'balsamic'] ['balsamic', 'fruity'] ['fresh', 'balsamic', 'honey', 'resinous', 'floral', 'rose', 'lily'] ['rose'] ['leaf', 'waxy', 'oily', 'vegetable', 'melon', 'cucumber'] ['floral', 'melon', 'oily', 'vegetable'] ['woody', 'resinous', 'fruity'] ['ester', 'fruity', 'woody'] ['fresh', 'fruity'] ['aldehydic', 'ambery', 'green', 'lemon', 'waxy'] ['fresh', 'herbal', 'coconut', 'woody', 'rancid', 'fruity'] ['floral', 'fresh', 'fruity', 'mint'] ['ambrette', 'dry', 'musk'] ['fruity', 'leaf', 'violetflower'] ['herbal', 'fruity', 'coconut'] ['coconut', 'dairy', 'herbal', 'lactonic'] ['herbal', 'jasmin', 'sweet', 'fruity', 'woody'] ['earthy', 'herbal'] ['fruity'] ['apple'] ['grapefruit', 'fresh', 'floral'] ['balsamic', 'woody'] ['nut', 'phenolic', 'meat'] ['caramellic', 'cooked', 'meat'] ['terpenic', 'oily'] ['waxy'] ['herbal', 'fresh', 'resinous', 'pungent', 'camphor', 'coniferous', 'terpenic'] ['camphor'] ['gourmand', 'nut', 'green', 'earthy', 'alliaceous', 'vegetable', 'meat'] ['mint', 'vegetable'] ['fruity', 'cinnamon', 'caramellic', 'leather', 'coffee', 'burnt'] ['resinous', 'sweet'] ['alcoholic', 'earthy', 'musk'] ['earthy', 'musk'] ['lily', 
'lemon', 'terpenic', 'floral', 'resinous'] ['floral'] ['jasmin', 'resinous'] ['aldehydic', 'ethereal', 'fruity', 'jasmin'] ['oily', 'fruity'] ['jasmin', 'oily', 'watery'] ['butter', 'plum', 'lactonic', 'berry', 'fruity'] ['apple', 'fruity', 'green', 'resinous'] ['sweet', 'almond', 'cherry', 'balsamic', 'cinnamon'] ['blackcurrant', 'cinnamon'] ['chemical', 'terpenic', 'green', 'oily', 'cucumber'] ['green', 'resinous'] ['banana', 'cheese', 'butter', 'fruity'] ['apple', 'banana', 'berry'] ['fermented', 'pungent', 'butter', 'fruity', 'cheese', 'sour'] ['body', 'cheese', 'sour'] ['cheese', 'sulfuric', 'cooked', 'dairy', 'meat', 'gourmand', 'alliaceous'] ['sulfuric', 'sweet'] ['rose', 'green'] ['earthy', 'floral', 'sweet'] ['rose', 'seafood', 'meat', 'roasted', 'sulfuric', 'ammoniac', 'plastic', 'burnt', 'chemical', 'alliaceous'] ['burnt', 'earthy', 'sulfuric'] ['blueberry', 'waxy', 'fruity', 'floral', 'citrus', 'lily', 'fresh'] ['citrus', 'earthy', 'floral'] ['odorless', 'fruity'] ['balsamic', 'clean', 'green', 'plastic'] ['fermented', 'alcoholic', 'oily', 'ethereal', 'cheese', 'fruity', 'mushroom'] ['floral', 'grass', 'lemon', 'sweet'] ['herbal', 'balsamic', 'mint', 'spicy', 'woody'] ['herbal', 'spicy', 'woody'] ['gourmand', 'roasted', 'meat', 'alliaceous', 'sulfuric', 'burnt'] ['meat', 'roasted', 'sulfuric'] ['cinnamon', 'resinous', 'body', 'balsamic', 'tobacco', 'leather', 'animalic', 'sour'] ['balsamic', 'burnt', 'sour'] ['resinous', 'chemical', 'animalic', 'floral'] ['floral', 'jasmin', 'seafood'] ['sweet', 'vanilla', 'medicinal', 'phenolic'] ['medicinal', 'phenolic', 'sweet'] ['vegetable', 'nut', 'bread', 'earthy'] ['earthy', 'floral', 'nut', 'pepper'] ['grape', 'caramellic', 'burnt', 'fruity'] ['caramellic', 'fruity'] ['burnt', 'alliaceous', 'sulfuric'] ['caramellic'] ['earthy', 'musty', 'coffee', 'burnt', 'caramellic', 'cacao', 'nut'] ['green', 'resinous', 'roasted'] ['earthy', 'nut', 'coffee'] ['butter', 'cooked', 'earthy'] ['fresh', 'aldehydic', 'lily', 
'floral', 'citrus'] ['aldehydic', 'hyacinth', 'lily', 'watery', 'waxy'] ['fresh', 'fermented', 'alcoholic', 'odorless'] ['odorless'] ['burnt', 'leather', 'coffee', 'medicinal', 'smoky', 'phenolic'] ['phenolic', 'spicy'] ['violetflower', 'woody'] ['ambergris', 'ambery', 'animalic', 'dry', 'fresh', 'metallic'] ['fresh', 'ethereal', 'caramellic', 'fruity'] ['alcoholic', 'ethereal', 'musty', 'nut'] ['hyacinth', 'fruity', 'honey'] ['honey', 'resinous'] ['resinous', 'balsamic', 'rose', 'fruity'] ['balsamic', 'fruity'] ['fruity'] ['floral', 'fresh', 'fruity'] ['burnt', 'alliaceous', 'sulfuric', 'roasted', 'meat'] ['meat'] ['sweet', 'ethereal', 'fruity', 'banana'] ['banana', 'melon', 'pear'] ['bread', 'berry', 'syrup', 'caramellic'] ['bread', 'burnt', 'syrup'] ['violetflower', 'floral', 'woody'] ['woody'] ['resinous', 'grape', 'bread', 'caramellic', 'ethereal', 'burnt', 'fruity', 'mushroom'] ['berry', 'wine'] ['cooked'] ['odorless'] ['banana', 'ester', 'body', 'fruity', 'nut', 'sour', 'butter', 'cheese'] ['fruity'] ['rose', 'floral', 'fresh', 'aldehydic', 'citrus'] ['aldehydic', 'floral', 'watery', 'waxy'] ['fresh', 'ethereal', 'banana', 'fruity'] ['pear', 'rose'] ['aldehydic', 'pungent', 'cucumber', 'chemical', 'ethereal'] ['aldehydic', 'ethereal', 'fresh'] ['clean', 'fresh', 'herbal'] ['fresh', 'green', 'mint', 'rose'] ['woody', 'balsamic', 'fruity', 'floral'] ['fruity', 'resinous', 'roasted'] ['vegetable', 'body', 'burnt', 'caramellic', 'earthy', 'cacao', 'coffee', 'nut'] ['cacao', 'earthy', 'nut'] ['mint', 'fresh', 'camphor'] ['cooling', 'musty', 'spicy'] ['coniferous', 'resinous', 'camphor'] ['camphor', 'rancid', 'resinous'] ['mint', 'fresh'] ['clean', 'floral', 'fresh', 'grass', 'lily', 'resinous', 'spicy', 'sweet'] ['fruity', 'resinous'] ['fruity', 'herbal', 'resinous', 'rose'] ['earthy', 'mint', 'fruity', 'jasmin'] ['herbal', 'jasmin', 'oily'] ['herbal', 'leaf', 'hyacinth', 'rose'] ['leaf', 'vegetable'] ['geranium', 'citrus', 'apple', 'fruity', 'rose'] ['apple', 
'floral'] ['vegetable', 'meat', 'sulfuric', 'alliaceous'] ['green', 'sulfuric', 'sweet'] ['almond', 'smoky', 'chemical', 'medicinal', 'coffee', 'leather', 'phenolic'] ['earthy', 'leather', 'medicinal', 'phenolic'] ['fresh', 'fruity'] ['green', 'mushroom', 'oily', 'sweet'] ['fresh', 'rose', 'citrus', 'fruity', 'floral'] ['green', 'pear', 'rose', 'tropicalfruit', 'waxy', 'woody'] ['fresh', 'coniferous', 'resinous', 'camphor'] ['herbal'] ['waxy', 'geranium', 'floral', 'banana', 'fruity', 'rose'] ['cooling', 'green', 'herbal', 'rose', 'waxy'] ['mint'] ['odorless'] ['body', 'ethereal', 'nut', 'burnt', 'seafood', 'roasted'] ['bread', 'nut', 'vegetable', 'woody'] ['phenolic', 'syrup', 'fruity', 'earthy', 'coconut'] ['mint', 'spicy'] ['cooling', 'mint'] ['mint'] ['fruity', 'butter', 'coconut'] ['coconut', 'dairy', 'fruity', 'resinous'] ['fatty', 'dairy'] ['waxy', 'woody'] ['chemical', 'fruity', 'caramellic', 'ethereal'] ['burnt', 'fresh', 'fruity', 'sweet'] [] ['odorless'] ['apple', 'balsamic', 'wine', 'geranium', 'caramellic', 'herbal', 'fruity'] ['fruity', 'herbal'] ['green', 'chemical', 'waxy', 'vegetable', 'mushroom', 'fruity', 'pear'] ['body', 'cooked', 'green', 'pear'] ['cinnamon', 'vanilla'] ['vanilla'] ['berry', 'meat', 'tropicalfruit', 'alliaceous', 'blackcurrant', 'sulfuric'] ['roasted', 'sweet', 'vegetable'] ['pear', 'liquor', 'burnt', 'grape', 'alliaceous', 'ethereal', 'apple', 'caramellic', 'chemical', 'fruity'] ['chemical', 'fruity'] ['fruity', 'apple'] ['cheese', 'green', 'spicy', 'tropicalfruit', 'woody'] ['dairy', 'burnt', 'earthy', 'cheese', 'metallic', 'vegetable', 'sulfuric', 'alliaceous'] ['blackcurrant', 'burnt', 'sulfuric', 'vegetable'] ['fruity', 'woody', 'floral'] ['ambery', 'ambrette', 'dry'] ['woody', 'terpenic', 'camphor'] ['woody'] ['floral'] ['clean', 'fresh'] ['green', 'apple', 'banana', 'fruity'] ['apple', 'herbal'] ['herbal', 'floral', 'fresh', 'woody', 'fruity'] ['apple', 'herbal', 'woody'] ['alliaceous', 'cooked', 'burnt', 'ethereal', 
'seafood', 'ammoniac', 'chemical'] ['alliaceous', 'cheese', 'cooked', 'mushroom'] ['resinous', 'floral', 'phenolic'] ['earthy', 'spicy'] ['caramellic', 'odorless'] ['odorless'] ['fruity', 'floral', 'lily'] ['citrus', 'fresh', 'lily'] ['lily', 'spicy', 'floral', 'fresh', 'resinous'] ['floral', 'fresh', 'fruity', 'green', 'musty'] ['fermented', 'cooked', 'blackcurrant', 'butter', 'sour', 'gourmand', 'burnt', 'caramellic', 'berry', 'phenolic', 'bread', 'syrup'] ['caramellic', 'fruity', 'resinous'] ['sweet', 'phenolic', 'smoky', 'vanilla'] ['vanilla'] ['musk', 'herbal', 'mint'] ['mint', 'musk', 'spicy'] ['leather', 'sweet', 'coconut', 'dairy', 'fruity', 'almond', 'vanilla', 'medicinal'] ['floral', 'medicinal', 'phenolic'] ['grapefruit', 'floral', 'fruity', 'resinous'] ['balsamic', 'fruity', 'green', 'resinous', 'woody'] ['floral', 'fresh', 'lily'] ['citrus', 'floral', 'green', 'melon'] ['fermented', 'fruity', 'fresh', 'apple', 'banana', 'alcoholic', 'ethereal'] ['ethereal', 'fresh', 'fruity'] ['vegetable', 'chemical', 'alliaceous'] ['ethereal', 'sulfuric'] ['fatty', 'fruity', 'sour'] ['butter'] ['floral', 'sweet', 'resinous', 'honey', 'balsamic', 'fruity'] ['fruity', 'musty', 'resinous'] ['fermented', 'sour', 'caramellic', 'fruity'] ['berry', 'green', 'herbal', 'honey', 'tropicalfruit'] ['medicinal', 'phenolic', 'leather'] ['leather', 'phenolic', 'terpenic'] ['citrus', 'green', 'woody', 'fresh', 'lily', 'herbal', 'lemon', 'fruity', 'floral'] ['citrus', 'lemon'] ['floral', 'lily'] ['ethereal', 'lily'] ['woody'] ['earthy', 'woody'] ['medicinal', 'vanilla', 'resinous', 'fruity', 'spicy', 'phenolic'] ['fruity', 'herbal', 'phenolic', 'spicy'] ['fruity', 'pungent', 'sour'] ['earthy'] ['waxy', 'floral'] ['balsamic', 'floral'] ['roasted', 'coffee', 'plastic', 'phenolic', 'medicinal', 'sulfuric', 'seafood', 'alliaceous', 'meat', 'chemical'] ['meat', 'metallic', 'phenolic', 'roasted', 'sulfuric'] ['musk', 'camphor', 'earthy'] ['balsamic', 'earthy', 'green', 'liquor', 'musk', 
'resinous'] ['lemon', 'fresh', 'herbal', 'fruity', 'banana'] ['floral', 'fresh', 'fruity', 'herbal'] ['floral', 'berry', 'citrus', 'fruity', 'rose', 'geranium'] ['floral', 'fresh', 'fruity'] ['herbal', 'leaf', 'hyacinth', 'rose'] ['hyacinth', 'rose'] ['waxy', 'rancid', 'orange', 'fatty', 'aldehydic', 'citrus', 'oily'] ['green', 'herbal', 'orange'] ['sweet', 'fresh', 'fruity', 'alcoholic', 'butter'] ['alcoholic', 'cacao', 'cheese', 'liquor', 'vegetable'] ['earthy', 'woody', 'cooling', 'camphor'] ['camphor', 'cooling', 'resinous'] ['aldehydic', 'lemon', 'floral', 'citrus'] ['citrus', 'fresh', 'herbal'] ['vanilla'] ['floral', 'vanilla'] ['terpenic', 'coniferous', 'resinous', 'camphor'] ['camphor'] ['seafood', 'earthy', 'vegetable', 'meat', 'alliaceous', 'sulfuric', 'bread', 'gourmand', 'nut', 'coffee'] ['vegetable'] ['spicy', 'vanilla', 'clove', 'medicinal', 'phenolic', 'smoky'] ['clove', 'sweet', 'woody'] ['ethereal', 'woody'] ['animalic', 'fresh', 'fruity', 'green', 'herbal', 'sour', 'spicy', 'woody'] ['phenolic', 'spicy', 'dairy', 'vanilla'] ['resinous', 'woody'] ['resinous', 'camphor'] ['camphor', 'coniferous', 'resinous'] ['oily', 'rose', 'fruity'] ['leaf', 'rose', 'waxy'] ['tropicalfruit'] ['apple', 'tropicalfruit', 'waxy'] ['green', 'resinous', 'earthy', 'vegetable', 'pepper'] ['green', 'woody'] ['floral', 'sweet', 'resinous', 'phenolic', 'fruity'] ['balsamic', 'berry', 'powdery'] ['woody', 'fruity', 'herbal', 'resinous'] ['oily', 'resinous'] ['leather', 'vanilla', 'smoky', 'medicinal', 'phenolic'] ['phenolic'] ['fruity', 'mint'] ['fruity', 'mint'] ['resinous', 'herbal', 'woody', 'floral', 'fruity'] ['fruity', 'rose'] ['herbal', 'burnt', 'ethereal', 'fresh', 'fruity'] ['berry', 'cheese', 'sweet'] ['leaf', 'fruity', 'green'] ['leaf', 'mushroom', 'violetflower'] ['herbal', 'camphor', 'spicy'] ['woody'] ['burnt', 'vegetable', 'earthy', 'nut', 'meat'] ['coffee', 'earthy', 'meat', 'nut'] ['powdery', 'sweet', 'cherry', 'cinnamon', 'almond', 'vanilla'] ['dairy', 
'herbal', 'phenolic', 'powdery', 'vanilla'] ['rose', 'fresh', 'citrus', 'lily', 'floral'] ['aldehydic', 'waxy'] ['ethereal', 'bread', 'floral', 'lily', 'resinous'] ['clean', 'fruity', 'green', 'oily', 'rose'] ['fresh', 'mint'] ['herbal', 'sweet', 'tobacco'] ['woody', 'alcoholic', 'musk', 'earthy'] ['earthy', 'rancid', 'watery', 'woody'] ['chemical', 'spicy', 'smoky', 'medicinal', 'leather', 'phenolic'] ['camphor', 'cooling', 'smoky'] ['resinous', 'camphor'] ['balsamic', 'chemical', 'mint', 'resinous', 'sweet'] ['floral', 'fruity', 'citrus'] ['green', 'metallic', 'orange', 'rancid', 'sulfuric', 'waxy'] ['earthy', 'woody'] ['ambery', 'animalic', 'musk'] ['apple', 'oily', 'butter', 'banana', 'plum', 'fruity', 'pear'] ['berry', 'clove', 'herbal'] ['oily', 'cognac', 'fruity'] ['fruity', 'green', 'musty', 'waxy'] ['tobacco', 'burnt', 'animalic', 'sulfuric', 'blackcurrant'] ['blackcurrant', 'powdery', 'spicy', 'sulfuric'] ['alliaceous', 'fruity', 'ethereal'] ['cacao', 'resinous'] ['ripe', 'vegetable', 'fresh', 'apple', 'fruity', 'ethereal'] ['aldehydic', 'ethereal', 'fresh', 'fruity'] ['mushroom', 'fresh', 'fruity', 'ethereal', 'fermented'] ['green'] ['fruity', 'mushroom', 'green', 'sweet', 'alcoholic', 'ethereal', 'resinous', 'leaf'] ['hyacinth', 'leaf', 'mushroom', 'nut'] ['dairy', 'meat', 'sulfuric', 'mushroom', 'earthy', 'alliaceous', 'vegetable'] ['meat'] ['ethereal', 'oily', 'woody', 'ester', 'banana', 'cinnamon'] ['camphor', 'floral', 'fruity', 'woody'] ['resinous', 'fruity', 'cinnamon', 'balsamic'] ['cherry', 'cooling', 'floral', 'green', 'spicy', 'sweet'] ['floral', 'fruity', 'geranium', 'rose', 'fresh', 'citrus'] ['citrus', 'floral', 'leaf', 'sweet'] [] ['herbal', 'vegetable'] ['cognac', 'fermented', 'body', 'sour', 'cheese', 'fruity', 'apple', 'ethereal', 'chemical', 'banana'] ['butter', 'fruity'] ['fatty', 'odorless', 'waxy', 'fruity', 'oily'] ['fruity', 'oily'] ['green', 'sharp', 'fresh', 'pungent', 'ethereal', 'fruity'] ['almond', 'fruity', 'green', 
'herbal', 'sweet'] ['green', 'camphor', 'woody', 'anisic'] ['floral', 'woody'] ['wine', 'berry', 'plum', 'cooling', 'honey', 'blueberry', 'rose', 'fruity', 'fermented'] ['balsamic', 'fruity', 'rose'] ['oily', 'fruity'] ['butter', 'floral', 'wine'] ['oily', 'animalic', 'fruity', 'waxy', 'musk'] ['animalic', 'clean', 'dry', 'metallic', 'musk', 'powdery', 'tropicalfruit', 'waxy'] ['meat', 'sulfuric'] ['fatty', 'gourmand', 'meat', 'sulfuric'] ['hyacinth', 'floral', 'cinnamon'] ['cinnamon'] ['fresh', 'powdery', 'camphor', 'mint', 'resinous', 'apple', 'violetflower', 'ethereal', 'chemical'] ['ambery', 'chemical', 'rancid', 'violetflower', 'woody'] ['green', 'woody', 'citrus', 'fresh', 'herbal', 'resinous', 'floral'] ['citrus', 'floral', 'resinous'] ['orange', 'fresh', 'citrus', 'floral', 'lily'] ['fruity', 'green', 'lily', 'woody'] ['floral', 'herbal', 'grapefruit', 'fresh', 'resinous'] ['lily', 'resinous'] ['butter', 'sour'] ['caramellic', 'dairy', 'fruity'] ['nut', 'vegetable', 'earthy'] ['earthy', 'floral', 'green', 'pepper', 'resinous'] ['pungent', 'almond', 'fresh', 'cucumber', 'leaf', 'vegetable'] ['almond', 'cheese'] ['phenolic', 'smoky', 'balsamic', 'almond', 'resinous', 'powdery', 'sweet', 'vanilla', 'anisic'] ['almond', 'floral', 'spicy'] ['nut', 'earthy', 'caramellic', 'burnt', 'cacao', 'coffee'] ['coffee', 'cooked', 'meat', 'nut', 'sulfuric'] ['floral', 'woody', 'berry'] ['berry', 'cedar', 'floral', 'lactonic'] ['odorless', 'fruity'] ['sour'] ['leaf', 'fruity', 'green'] ['green'] [] ['animalic', 'green', 'woody'] ['berry', 'mint', 'blackcurrant'] ['blackcurrant', 'mint'] ['fruity', 'rose', 'violetflower'] ['citrus', 'floral'] ['fresh', 'mushroom', 'green', 'chemical', 'seafood', 'terpenic'] ['citrus', 'fresh'] ['fresh', 'geranium', 'fruity', 'citrus', 'rose'] ['apple', 'dry', 'fatty', 'lemon', 'pear', 'rose', 'waxy'] ['honey', 'balsamic', 'resinous', 'rose', 'floral', 'lily'] ['fresh', 'rose'] ['tropicalfruit', 'fruity', 'honey', 'rose'] ['balsamic', 
'fruity'] ['floral', 'woody'] ['lactonic', 'plum', 'tropicalfruit'] ['medicinal', 'balsamic', 'earthy', 'resinous', 'camphor'] ['camphor', 'coniferous', 'cooling'] ['orange', 'woody', 'grapefruit'] ['grapefruit'] ['animalic', 'musk'] ['ambrette', 'animalic', 'musk', 'vegetable'] ['blackcurrant', 'cooked', 'alliaceous', 'roasted', 'meat', 'gourmand'] ['alliaceous', 'meat', 'roasted'] ['rose', 'woody', 'fruity'] ['woody'] ['fatty', 'powdery', 'seafood', 'musk', 'chemical'] ['dry', 'fatty', 'musk', 'sweet', 'waxy'] ['grass', 'cucumber', 'cooked', 'rancid', 'fruity'] ['fatty', 'fruity', 'mushroom'] ['sweet', 'fresh', 'fruity', 'alcoholic', 'butter'] ['ethereal', 'fermented', 'fresh'] ['metallic', 'ripe', 'vegetable', 'sulfuric', 'tropicalfruit'] ['berry', 'dry', 'pungent'] ['chemical', 'coffee', 'meat', 'alliaceous'] ['alliaceous', 'gourmand', 'meat', 'sharp'] ['fruity', 'berry', 'plum'] ['floral', 'woody'] ['vegetable', 'alliaceous'] ['alliaceous', 'cooked']

Generating test predictions

test_df = pd.read_csv("/content/data/test.csv")

mols = [Chem.MolFromSmiles(smile) for smile in test_df["SMILES"].tolist()]

feat = dc.feat.ConvMolFeaturizer()  # alternative: dc.feat.CircularFingerprint(size=1024)

test_arr = feat.featurize(mols)

print(test_arr.shape)

(1079,)

test_dataset = dc.data.NumpyDataset(X=test_arr, y=np.zeros((len(test_df),109)))

print(test_dataset)

<NumpyDataset X.shape: (1079,), y.shape: (1079, 109), w.shape: (1079, 1), task_names: [ 0 1 2 ... 106 107 108]>

y_pred = model.predict(test_dataset)

top_5_preds = []
c = 0  # number of molecules with no label above the threshold
# print(y_true.shape, y_pred.shape)
for i in range(y_pred.shape[0]):
    final_pred = []
    prob_val = []
    # Threshold the positive-class probability of each of the 109 labels.
    for y in range(109):
        prediction = y_pred[i, y]
        if prediction[1] > 0.30:
            final_pred.append(1)
            prob_val.append(prediction[1])
        else:
            final_pred.append(0)
    smell_ids = np.where(np.array(final_pred) == 1)
    smells = [vocab[k] for k in smell_ids[0]]
    #smells = [smells[k] for k in np.argsort(np.array(prob_val))] #to further order based on probability
    if len(smells) == 0:
        c += 1
    # Truncate to 15 labels, or pad with the most frequent labels (top_15).
    if len(smells) > 15:
        smells = smells[:15]
    else:
        new_smells = [x for x in top_15 if x not in smells]
        smells.extend(new_smells[:15 - len(smells)])
    assert len(smells) == 15
    # Group the 15 labels into 5 sentences of 3 labels each.
    sents = []
    for sent in range(0, 15, 3):
        sents.append(",".join(smells[sent:sent + 3]))
    pred = ";".join(sents)
    top_5_preds.append(pred)

print(len(top_5_preds))
print("[info] did not predict for ", c)

1079

[info] did not predict for 3
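
The thresholding, padding and grouping steps of the loop above can also be written as one small helper, which makes the rule easier to check on toy inputs. This is only a sketch: the function name `format_prediction` and its parameters are hypothetical, `fallback` stands in for the notebook's `top_15` list, and `probs` is assumed to hold the positive-class probability for each label in `vocab`.

```python
def format_prediction(probs, vocab, fallback, threshold=0.30, n_labels=15, group=3):
    """Build a submission string such as 'a,b,c;d,e,f' from label probabilities.

    probs    : positive-class probability for each label in vocab (assumed input)
    fallback : labels used for padding (plays the role of top_15 in the notebook)
    """
    # Keep every label whose probability clears the threshold, in vocab order.
    smells = [label for label, p in zip(vocab, probs) if p > threshold]
    # Truncate to n_labels, then pad with fallback labels not already present.
    smells = smells[:n_labels]
    padding = [label for label in fallback if label not in smells]
    smells.extend(padding[:n_labels - len(smells)])
    if len(smells) != n_labels:
        raise ValueError("fallback list is too short to pad the prediction")
    # Join the labels into sentences of `group` labels each.
    sentences = [",".join(smells[i:i + group]) for i in range(0, n_labels, group)]
    return ";".join(sentences)


# Toy example with 4 labels per prediction, grouped in pairs.
toy_vocab = ["apple", "banana", "cedar", "mint"]
toy_probs = [0.9, 0.1, 0.5, 0.2]
toy_fallback = ["fruity", "apple", "woody", "green"]
example = format_prediction(toy_probs, toy_vocab, toy_fallback, n_labels=4, group=2)
print(example)
```

With the defaults (`n_labels=15`, `group=3`) the helper produces the same five-sentence layout as the loop, provided the fallback list is long enough to fill the gaps.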

final = pd.DataFrame({"SMILES":test_df.SMILES.tolist(),"PREDICTIONS":top_5_preds})

final.head()

| | SMILES | PREDICTIONS |
|---|---|---|
| 0 | CCC(C)C(=O)OC1CC2CCC1(C)C2(C)C | balsamic,camphor,cedar;coniferous,fruity,resin... |
| 1 | CC(C)C1CCC(C)CC1OC(=O)CC(C)O | cooling,fruity,mint;floral,woody,herbal;green,... |
| 2 | CC(=O)/C=C/C1=CCC[C@H](C1(C)C)C | berry,cedar,fruity;powdery,violetflower,woody;... |
| 3 | CC(=O)OCC(COC(=O)C)OC(=O)C | apple,banana,ester;fruity,odorless,floral;wood... |
| 4 | CCCCCCCC(=O)OC/C=C(/CCC=C(C)C)\C | cognac,floral,fruity;geranium,rose,waxy;woody,... |

final.to_csv("submission_deepchem_graph_conv_0.3.csv",index=False)

This submission gives a score of 0.277 on the leaderboard.

MultiTaskClassifier

Next we will use DeepChem's MultitaskClassifier with the CircularFingerprint featurizer.

The model can be used with any of the following featurizers:

- CircularFingerprint

- RDKitDescriptors

- CoulombMatrixEig

- RdkitGridFeaturizer

- BindingPocketFeaturizer

- ElementPropertyFingerprint

Feel free to substitute any one of these, and do report back your results and findings!

mols = [Chem.MolFromSmiles(smile) for smile in data_df["text"].tolist()]

feat = dc.feat.CircularFingerprint(size=1024)

arr = feat.featurize(mols)

print(arr.shape)

(4316, 1024)

labels = []

train_df = pd.read_csv("data/train.csv")

for x in train_df.SENTENCE.tolist():
    labels.append(np.array(get_ohe_label(x)))

labels = np.array(labels)

print(labels.shape)

(4316, 109)
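
`get_ohe_label` is defined earlier in the notebook and is not reproduced here. As a rough illustration of what such a helper might do, the sketch below maps a label string to a multi-hot vector with one slot per smell. The function name `multi_hot`, the toy vocabulary, and the comma-separated input format are assumptions made for illustration, not the notebook's actual implementation.

```python
def multi_hot(sentence, vocab):
    """Encode a comma-separated label string as a 0/1 list over vocab.

    Assumes labels are separated by commas and that every label appears
    in vocab; an unknown label raises KeyError.
    """
    index = {label: i for i, label in enumerate(vocab)}
    vec = [0] * len(vocab)
    for label in sentence.split(","):
        label = label.strip()
        if label:  # skip empty fragments such as a trailing comma
            vec[index[label]] = 1
    return vec


toy_vocab = ["apple", "banana", "mint"]  # the real vocabulary has 109 smells
encoded = multi_hot("mint,apple", toy_vocab)
print(encoded)
```

Stacking one such vector per training molecule gives a label matrix with one row per molecule and one column per smell, which is the (4316, 109) shape printed above.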

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(arr,labels, test_size=.1, random_state=42)

train_dataset = dc.data.NumpyDataset(X=X_train, y=y_train)

val_dataset = dc.data.NumpyDataset(X=X_test, y=y_test)

print(train_dataset)

<NumpyDataset X.shape: (3884, 1024), y.shape: (3884, 109), w.shape: (3884, 1), task_names: [ 0 1 2 ... 106 107 108]>

print(val_dataset)

<NumpyDataset X.shape: (432, 1024), y.shape: (432, 109), w.shape: (432, 1), ids: [0 1 2 ... 429 430 431], task_names: [ 0 1 2 ... 106 107 108]>

The MultitaskClassifier is just a stack of dense layers, but that still leaves a lot of options. How many layers should there be, and how wide should each one be? What dropout rate should we use? What learning rate?

These are called hyperparameters. DeepChem provides a selection of hyperparameter optimization algorithms, which are found in the dc.hyper package. We use GridHyperparamOpt, which is the most basic method. We just give it a list of options for each hyperparameter and it exhaustively tries all combinations of them.
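
To get a feel for how much work an exhaustive search involves, the combinations can be enumerated by hand. The sketch below is not DeepChem's implementation; `expand_grid` is a hypothetical helper that uses `itertools.product` to list every combination of the option lists used in this section.

```python
from itertools import product


def expand_grid(params):
    """Return one dict per combination of the option lists in params."""
    keys = list(params)
    return [dict(zip(keys, values))
            for values in product(*(params[k] for k in keys))]


grid = {
    'layer_sizes': [[500], [1000], [1000, 1000]],
    'dropouts': [0.2, 0.5],
    'learning_rate': [0.001, 0.0001],
    'n_tasks': [109],
    'n_features': [1024],
}

combos = expand_grid(grid)
print(len(combos))  # 3 * 2 * 2 * 1 * 1 = 12
print(combos[0])
```

Each of the 12 combinations means training one model, so the cost of a grid search grows multiplicatively with every option added to a list.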

import numpy as np

import numpy.random

params_dict = {
    'layer_sizes': [[500], [1000], [1000, 1000]],
    'dropouts': [0.2, 0.5],
    'learning_rate': [0.001, 0.0001],
    'n_tasks': [109],
    'n_features': [1024],
}

optimizer = dc.hyper.GridHyperparamOpt(dc.models.MultitaskClassifier)

metric = dc.metrics.Metric(dc.metrics.jaccard_score)

best_model, best_hyperparams, all_results = optimizer.hyperparam_search(
    params_dict, train_dataset, val_dataset, [], metric)

/usr/local/lib/python3.6/dist-packages/sklearn/metrics/_classification.py:1272: UndefinedMetricWarning: Jaccard is ill-defined and being set to 0.0 due to no true or predicted samples. Use `zero_division` parameter to control this behavior. _warn_prf(average, modifier, msg_start, len(result))
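
The warning above says the Jaccard score was set to 0.0 because there were no true or predicted samples, which leaves the ratio as 0/0. A minimal pure-Python sketch of the score on label sets makes that edge case explicit. The function name `jaccard` and the `empty_value` parameter are hypothetical; `empty_value` plays a role similar to the `zero_division` parameter mentioned in the warning.

```python
def jaccard(true_labels, pred_labels, empty_value=0.0):
    """Jaccard similarity |A & B| / |A | B| between two label collections.

    When both collections are empty the ratio is 0/0, so empty_value is
    returned instead.
    """
    t, p = set(true_labels), set(pred_labels)
    union = t | p
    if not union:
        return empty_value
    return len(t & p) / len(union)


print(jaccard(["fruity", "apple"], ["apple", "pear"]))  # one shared label out of three
print(jaccard([], []))                                   # undefined case, falls back to 0.0
```

The warning does not stop the search; it is a reminder that such cases are counted as 0.0 in the reported metric.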