Challenges Entered

Evaluate Natural Conversations

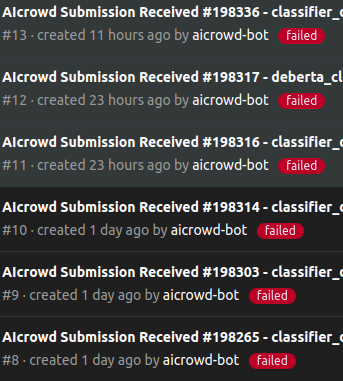

Advanced Building Control & Grid-Resilience

Small Object Detection and Classification

A benchmark for image-based food recognition

Using AI For Building’s Energy Management

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 199384 |
| graded | 199377 |
| failed | 199371 |

Interactive embodied agents for Human-AI collaboration

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 205297 |
| graded | 205296 |
| graded | 205295 |

Specialize and Bargain in Brave New Worlds

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 182993 |

Use an RL agent to build a structure with natural language inputs

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 199988 |
| graded | 199644 |
| failed | 199636 |

Language assisted Human - AI Collaboration

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 205297 |
| graded | 205296 |
| graded | 205295 |

| Participant | Rating |
|---|---|
| Chemago | 0 |
| ChunFu | 0 |
| danish_gamer | 0 |

| Participant | Rating |
|---|---|
| Chemago | 0 |
| ludwigbald | 0 |
| nutsu_shiman | 0 |

NeurIPS 2022 IGLU Challenge

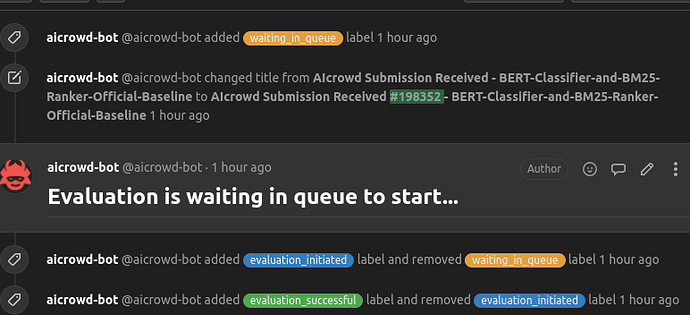

Do you see submissions fail at the ranker after the last fix?

Over 3 years ago

It has failed again. @dipam, note that it does not show logs even for the validation steps; it shows no logs at all. It would be nice to know what is going on with the ranker.

Do you see submissions fail at the ranker after the last fix?

Over 3 years ago

Here is my new submission: AIcrowd

Thank you very much.

Do you see submissions fail at the ranker after the last fix?

Over 3 years ago

Thank you, Dipam. Sorry for the confusion. I am resubmitting and will let you know. In any case it is odd, because the new submission is essentially an old one (which ran successfully and, as far as I can tell, does not hardcode anything) with different model weights.

Do you see submissions fail at the ranker after the last fix?

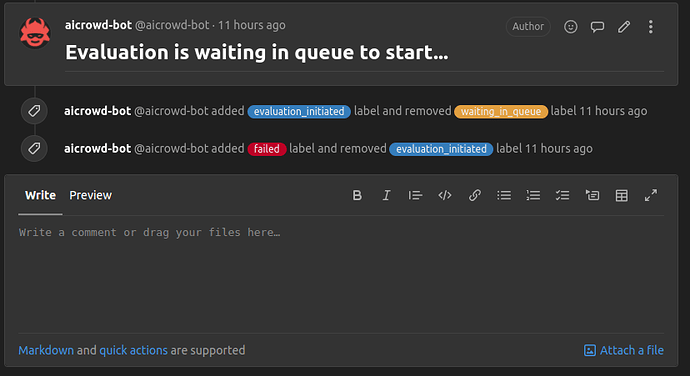

Over 3 years ago

Hi, I am still seeing failures as before. The submission fails at the ranker evaluation and then no logs are shown. Is this happening to anyone else? Maybe it is an error that now occurs only for certain evaluations?

For example, see #204968, which I believe was resubmitted from the host side after the fix.

Getting run failures without any logs

Over 3 years ago

Excellent, thank you. Luckily I corrected the yml. When I run jupyter-repo2docker on my computer, I do not need to add gcc manually for the Docker image to build. Maybe I have a different version of repo2docker.

Getting run failures without any logs

Over 3 years ago

I just ran the baseline notebook. It does not fail, but it does not show any logs.

Getting run failures without any logs

Over 3 years ago

Hi, I have tried more than 5 different submissions in the past two days, and I am getting failures without any log message to debug.

How the world state can be parsed/ visualized

Almost 4 years ago

Hi, the competition description says "More information to follow on how the world state can be parsed/ visualized." I need some clarification on what the actions and the observables mean.

In particular, the official baseline states:

gridworld_state - Internal state from the iglu-gridworld simulator corresponding to the instruction

NOTE: The state will only contain the "avatarInfo" and "worldEndingState"

So why is the tape included in the steps files?

NeurIPS 2022: CityLearn Challenge

Critical information missing from building info

Almost 4 years ago

Hi @kingsley_nweye @dipam, have you checked this? It seems that some information provided in the local evaluation is not being handed to the model online.

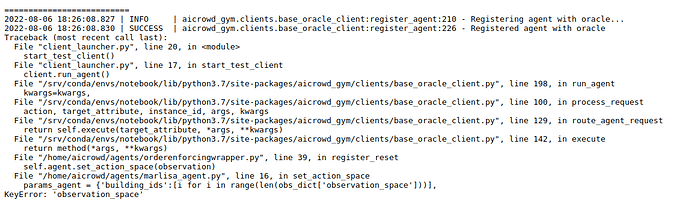

Multi agent coordinator and orderenforcingwrapper

Almost 4 years ago

I also found that the same thing is happening with observation_spaces: it was never added there.

Multi agent coordinator and orderenforcingwrapper

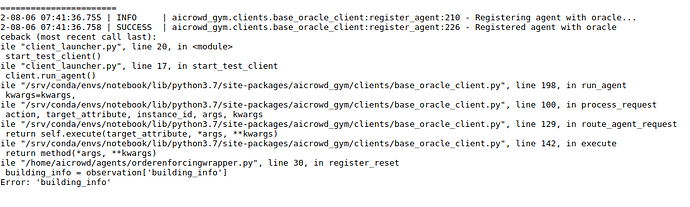

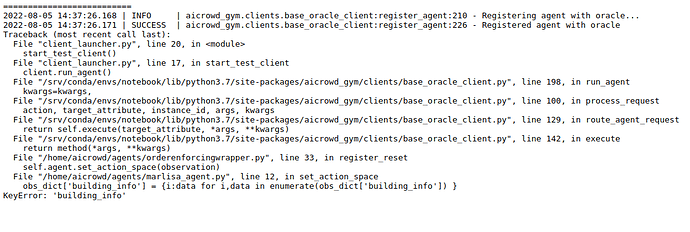

Almost 4 years ago

After running the test, I can confirm that the online evaluation is not passing building_info to the agent.

Critical information missing from building info

Almost 4 years ago

I think building_info is not being passed in the online evaluation.

In my opinion we should be able to access it, for compatibility with the existing agents in the citylearn repo.

Multi agent coordinator and orderenforcingwrapper

Almost 4 years ago

Hi @kingsley_nweye, thank you for adding building_info to the local_evaluation script.

I am trying to run MARLISA, which uses building_info, but I think that in production your evaluation is not passing the building info along.

This is the log I am getting.

I will run this code to double-check as soon as my submission count is refreshed. I will put it in the order-enforcing wrapper to catch the bug in the online evaluation:

    def register_reset(self, observation):
        """Get the first observation after env.reset, return action"""
        action_space = observation["action_space"]
        self.action_space = [dict_to_action_space(asd) for asd in action_space]
        obs = observation["observation"]
        self.num_buildings = len(obs)

        # CHECK MISSING INFO
        # I check that the dictionary contains the building_info.
        building_info = observation['building_info']

        for agent_id in range(self.num_buildings):
            action_space = self.action_space[agent_id]
            # self.agent.set_action_space(agent_id, action_space)
            self.agent.set_action_space(observation)

        return self.compute_action(obs)
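The check above raises a `KeyError` on the first missing key. A variant that reports every missing key at once might look like the following. This is only a sketch: `missing_reset_keys` and `EXPECTED_KEYS` are hypothetical names, and the key list is taken from the names used in this thread, not from the evaluator's source.

```python
# Hypothetical helper; the key names come from this thread,
# not from the evaluator's actual code.
EXPECTED_KEYS = ("action_space", "observation", "building_info")


def missing_reset_keys(observation, expected=EXPECTED_KEYS):
    """Return the expected keys that are absent from the reset payload."""
    return [key for key in expected if key not in observation]


# Toy payload imitating a reset dictionary that lacks building_info.
payload = {"action_space": [], "observation": [[0.0]]}
missing = missing_reset_keys(payload)
if missing:
    print(f"Reset payload is missing: {missing}")
```

Printing the list instead of raising should make the gap visible in the submission logs, assuming logs are shown at all.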

Multi agent coordinator and orderenforcingwrapper

Almost 4 years ago

Thank you very much, @dipam. I have another question: are we guaranteed that the observation spaces are the same for all buildings?

If not, I think the evaluation script should pass us the observation_spaces (as well as the action spaces) and the building information, as in the main examples of the citylearn repo.

See:

    # Contains the lower and upper bounds of the states and actions, to be provided to the agent to normalize the variables between 0 and 1.
    # Can be obtained using observations_spaces[i].low or .high
    env = CityLearn(**params)
    observations_spaces, actions_spaces = env.get_state_action_spaces()

    # Provides information on Building type, Climate Zone, Annual DHW demand, Annual Cooling Demand, Annual Electricity Demand, Solar Capacity, and correlations among buildings
    building_info = env.get_building_information()
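To make the normalization mentioned in the comment concrete, here is a small sketch of min-max scaling with per-feature bounds. It assumes the bounds are available as plain sequences, as `observations_spaces[i].low` and `.high` would provide. `normalize_observation` is a hypothetical helper, not part of CityLearn.

```python
def normalize_observation(obs, low, high):
    """Scale each feature to [0, 1] using its lower and upper bound."""
    scaled = []
    for value, lo, hi in zip(obs, low, high):
        span = hi - lo
        # A constant feature (hi == lo) carries no information; map it to 0.
        scaled.append((value - lo) / span if span else 0.0)
    return scaled


# Toy example with two features and made-up bounds.
print(normalize_observation([5.0, 20.0], low=[0.0, 0.0], high=[10.0, 40.0]))
```

If the observation spaces differ between buildings, each building needs its own `low` and `high`, which is why the agent would need all of the spaces and not just one.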

Multi agent coordinator and orderenforcingwrapper

Almost 4 years ago

@kingsley_nweye, there is no need to implement this. As long as I can change orderenforcingwrapper.py, it will be OK.

I saw that @dipam answered on Discord that we can modify the file as long as we respect the shape of the output.

Multi agent coordinator and orderenforcingwrapper

Almost 4 years ago

Hi @kingsley_nweye. Sorry, I am referring to a single coordinator, or as you put it, a 'multi-agent coordinator'. It should receive the observation and action space information for all the buildings.

Multi agent coordinator and orderenforcingwrapper

Almost 4 years ago

Hi, after looking at the local_evaluation.py code, it looks like one would have to modify the OrderEnforcingWrapper to pass all the information through to a multi-agent coordinator.

On the other hand, OrderEnforcingWrapper has this in its docstring:

TRY NOT TO CHANGE THIS

So can we change this, or should we find another way to pass the whole information to a coordinator agent?
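To picture the change being asked about: the wrapper could keep its external interface but hand the full list of per-building observations and action spaces to a single coordinator. The sketch below is only an illustration under assumptions. `Coordinator`, `CoordinatorWrapper`, and their methods are hypothetical, action spaces are represented as simple lists of (low, high) pairs, and none of this reproduces the real `OrderEnforcingWrapper`.

```python
class Coordinator:
    """Toy coordinator that sees every building at once (hypothetical)."""

    def set_spaces(self, action_spaces):
        # One entry per building; each entry lists that building's action bounds.
        self.action_dims = [len(space) for space in action_spaces]

    def act(self, observations):
        # Placeholder policy: a zero for every action dimension of every building.
        return [[0.0] * dim for dim in self.action_dims]


class CoordinatorWrapper:
    """Forwards the whole reset payload to a single coordinator."""

    def __init__(self, coordinator):
        self.coordinator = coordinator

    def register_reset(self, payload):
        self.coordinator.set_spaces(payload["action_space"])
        return self.compute_action(payload["observation"])

    def compute_action(self, observations):
        actions = self.coordinator.act(observations)
        # Keep the output shape unchanged: one action list per building.
        if len(actions) != len(observations):
            raise ValueError("expected one action list per building")
        return actions
```

The only contract preserved here is the shape of the output, one action list per building. Everything that happens inside the coordinator is free to use information from all buildings.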


Do you see submissions fail at the ranker after the last fix?

Over 3 years ago

Thank you. In my case it fails at the clariq ranker and does not provide logs from any step. I have run very similar code with weights of the same size successfully before, so, all the packages being equal, it should not be an OOM.