Location

CH

Challenges Entered

Machine Learning for detection of early onset of Alzheimers

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 134942 |
| graded | 134940 |
| graded | 134909 |

5 Problems 15 Days. Can you solve it all?

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 67302 |
| graded | 67300 |
| graded | 67285 |

PlantVillage is built on the premise that all knowledge that helps people grow food should be openly accessible to anyone on the planet.

ADDI Alzheimers Detection Challenge

Negative submission counts

About 5 years ago

Hi,

I accidentally tried to submit when I had no submissions left. The submission didn’t go through, but the counter now shows a negative number of submissions left. When the reset happened, the counter didn’t go back to zero; it increased but stayed negative, so now I need to wait even longer before submitting, even though no submission was actually evaluated.

Is this behavior expected? Or is it a bug?

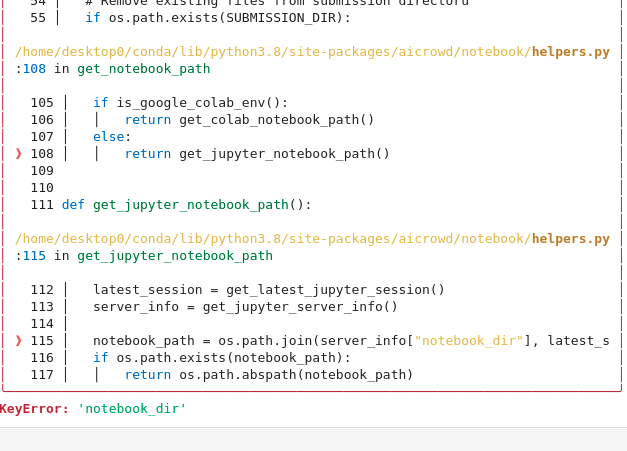

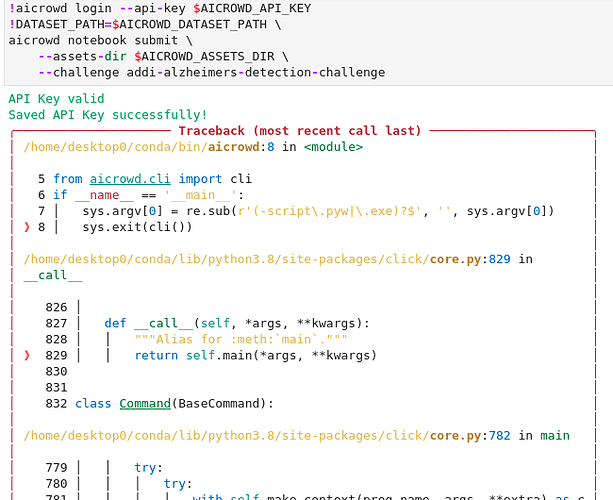

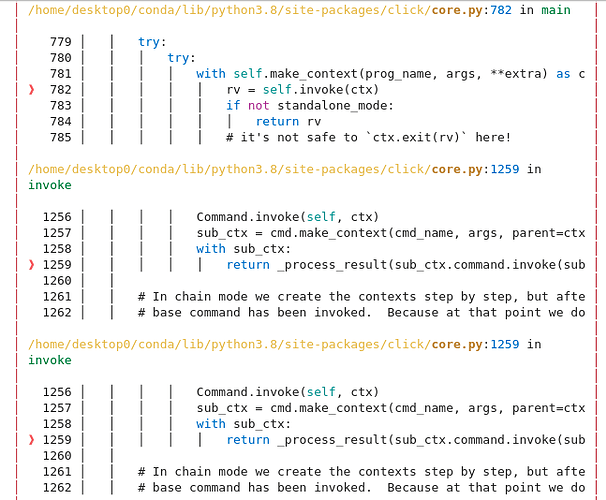

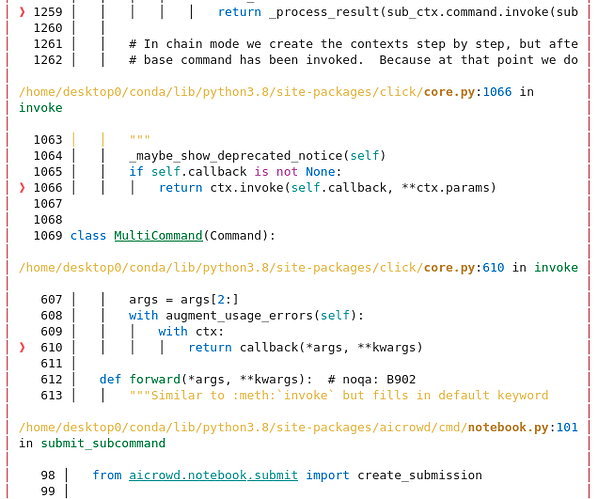

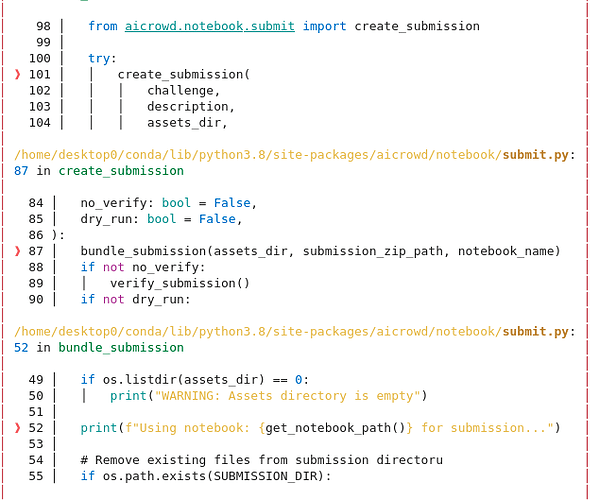

KeyError: "notebook_dir" when submitting

About 5 years ago

I already had this line in my notebook (with an additional -q flag), but re-running it solved the issue.

Thanks for your help!

KeyError: "notebook_dir" when submitting

About 5 years ago

Hi,

I am getting this error when trying to submit my notebook. Sorry for the split trace; I couldn’t find a better way to capture it.

Any idea why this is happening? Anyone else encountering this issue?

Web browser & Jupyter-lab in linux virtual machine?

About 5 years ago

I think you should be able to use the link provided in the pre-installed Firefox to access jupyter-lab. No idea why your machine thinks you don’t have a browser though…

Cryptic submission failed message

About 5 years ago

Hi @jyotish and thank you for your help!

For anyone interested, it seems to be an out-of-memory issue: the evaluation pods have only 4 GB of RAM.

I think it would make sense to have the same hardware capabilities on the training and evaluation machines, since you need to be able to load your models/assets on both, right? It feels weird to me to be allowed to develop with 14 GB but to test with only 4 GB. What do you think?
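A rough way to catch this kind of failure before submitting is to measure peak memory locally and compare it with the evaluation limit. The sketch below is only an illustration: it uses Python's standard `tracemalloc` module, takes the 4 GB figure from the post above as the budget, and the names `peak_traced_memory`, `fits_budget`, and `load_assets` are hypothetical. Note that `tracemalloc` only counts memory allocated through Python's own allocators, so the number it reports is a lower bound when native libraries allocate memory themselves.

```python
import tracemalloc

# Assumed budget: the 4 GB evaluation limit reported in the post above.
MEMORY_BUDGET_BYTES = 4 * 1024**3


def peak_traced_memory(fn, *args, **kwargs):
    """Run fn and return (result, peak bytes traced by tracemalloc while it ran)."""
    tracemalloc.start()
    try:
        result = fn(*args, **kwargs)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, peak


def fits_budget(peak_bytes, budget_bytes=MEMORY_BUDGET_BYTES, safety_margin=0.8):
    """Return True if the peak stays under a fraction of the budget."""
    return peak_bytes <= budget_bytes * safety_margin


def load_assets():
    # Hypothetical stand-in for loading a model or dataset: about 8 MiB of bytes.
    return bytearray(8 * 1024**2)


if __name__ == "__main__":
    _, peak = peak_traced_memory(load_assets)
    print(f"peak: {peak / 1024**2:.1f} MiB, fits budget: {fits_budget(peak)}")
```

Replacing `load_assets` with the notebook's real loading and prediction code gives a quick local estimate; the safety margin leaves headroom for whatever the measurement misses.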

Cryptic submission failed message

About 5 years ago

Hi,

My latest submissions (133671) are being rejected but I can’t find any sensible reason why. It failed in the “generate predictions” step and here are the logs I get:

Selecting runtime language: python

[NbConvertApp] Converting notebook predict.ipynb to notebook

[NbConvertApp] Executing notebook with kernel: python

[NbConvertApp] Writing 17040 bytes to predict.nbconvert.ipynb

Any idea what went wrong? Is it a timeout or an out-of-memory error of some kind?

DIBRD

Use of external data

About 6 years ago

Thanks for all the answers and also for organizing this event!

Looking forward to the publication of the guidelines!

Cheers

Use of external data

About 6 years ago

Thanks for the extensive answer! I’m glad you see it the same way I do.

A few more questions that are more or less linked to this:

- Is an algorithmic solution (i.e. involving no learning/AI/ML) acceptable? I’m thinking mostly about the PKHND challenge, where it’s obvious that it can be solved without ML.

- Regarding the publication of solutions, does that mean the solutions will be tested for reproducibility? And if yes, is 100% reproducibility necessary for a solution to be acceptable?

- How will the publication of the solutions work? Through posts in the discussions, GitHub repos and/or something else?

Use of external data

About 6 years ago

I was wondering whether the use of external data is allowed for this challenge (and for the other challenges of the Blitz as well).

If I were to find the annotated dataset online, it would be fairly easy to overfit the test set and get a 100% F1 score with it, although that would not be very interesting… Would something like this be allowed? Or does this qualify as “unfair activities”?

Negative submission counts

About 5 years ago

Here is what I’m seeing in the submissions tab.