Activity

Challenge Categories

Challenges Entered

Automating Building Data Classification

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 280014 |
| graded | 279563 |
| graded | 279562 |

Improve RAG with Real-World Benchmarks

Latest submissions

| Status | Submission ID |
|---|---|
| failed | 266505 |
| graded | 266503 |
| graded | 266497 |

Revolutionising Interior Design with AI

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 251762 |
| graded | 251753 |
| graded | 251702 |

Multi-Agent Dynamics & Mixed-Motive Cooperation

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 243898 |
| failed | 242953 |
| failed | 242945 |

Specialize and Bargain in Brave New Worlds

Latest submissions

| Status | Submission ID |
|---|---|
| submitted | 246741 |
| submitted | 246661 |
| submitted | 246539 |

Trick Large Language Models

Shopping Session Dataset

Small Object Detection and Classification

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 240507 |
| graded | 240506 |
| graded | 240490 |

Understand semantic segmentation and monocular depth estimation from downward-facing drone images

Latest submissions

| Status | Submission ID |
|---|---|
| submitted | 218884 |
| graded | 218883 |
| submitted | 218875 |

Identify user photos in the marketplace

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 210413 |
| failed | 210389 |
| graded | 210283 |

A benchmark for image-based food recognition

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 181873 |
| graded | 181872 |
| graded | 181870 |

Using AI For Building’s Energy Management

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 205123 |
| failed | 204464 |
| failed | 204102 |

What data should you label to get the most value for your money?

Interactive embodied agents for Human-AI collaboration

Improving the HTR output of Greek papyri and Byzantine manuscripts

Machine Learning for detection of early onset of Alzheimers

A benchmark for image-based food recognition

5 Puzzles, 3 Weeks. Can you solve them all? 😉

Project 2: Road extraction from satellite images

Project 2: build our own text classifier system, and test its performance.

5 PROBLEMS 3 WEEKS. CAN YOU SOLVE THEM ALL?

Predict if users will skip or listen to the music they're streamed

5 puzzles and 1 week to solve them!

Estimate depth in aerial images from monocular downward-facing drone

Latest submissions

| Status | Submission ID |
|---|---|
| submitted | 218884 |
| graded | 218883 |
| graded | 218801 |

Perform semantic segmentation on aerial images from monocular downward-facing drone

Latest submissions

| Status | Submission ID |
|---|---|
| submitted | 218875 |
| graded | 218874 |
| submitted | 218871 |

Commonsense Dialogue Response Generation

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 252068 |
| graded | 250634 |
| graded | 250621 |

Commonsense Persona Knowledge Linking

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 250633 |
| graded | 250629 |
| failed | 250626 |

Testing RAG Systems with Limited Web Pages

Latest submissions

| Status | Submission ID |
|---|---|
| graded | 265880 |
| failed | 265828 |
| graded | 265758 |

Create Videos with Spatially Aligned Stereo Audio

| Participant | Rating |
|---|---|
| dhanya_bahadur | 0 |

| Participant | Rating |
|---|---|
| gaurav_singhal | 0 |

- nebula · Food Recognition Benchmark 2022
- Sneaky_Ninjas · NeurIPS 2022: CityLearn Challenge
- 925ers · Visual Product Recognition Challenge 2023
- gs-sai · Scene Understanding for Autonomous Drone Delivery (SUADD'23)
- WeekendWarriors · MeltingPot Challenge 2023
- MoonWalkers · Commonsense Persona-Grounded Dialogue Challenge 2023
- RAGnarok_Retrievers · Meta Comprehensive RAG Benchmark: KDD Cup 2024

Brick by Brick 2024

📕 Community Contribution Baseline

Over 1 year ago

This baseline toolkit is designed to help you improve your submission for Round 2 of the Brick-by-Brick Challenge.

Getting Started

The baseline is built on the open-source library tslib. Follow these steps to get started:

- Clone the Repository: Clone the baseline repository.
- Install Required Dependencies: Set up the necessary dependencies using the instructions in the repository.
- Review the README: The README file contains a comprehensive guide to using the toolkit, including details on its features and submission workflow.
- Use the Colab Notebook: The Colab Notebook offers step-by-step instructions to help you explore the toolkit and prepare your submissions.

Key Features

- Preprocessing Pipeline: The baseline enables you to preprocess your data using the 4-hour sampling method.
- Extensibility for Future Enhancements: The toolkit is a foundation that participants can build upon. Some potential improvements include:
  - Adding new models to expand capabilities.
  - Enhancing loss functions for better optimisation.
  - Leveraging the hierarchical structure of classes to improve inference efficiency.
  - Refining preprocessing techniques.
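The exact 4-hour sampling routine lives in the baseline repository. As a rough illustration only, here is a minimal sketch that assumes the method means averaging irregular sensor readings into fixed 4-hour bins. The helper name `resample_4h` and the `(timestamp, value)` input format are hypothetical, not taken from the toolkit.

```python
from collections import defaultdict
from datetime import datetime, timedelta

BIN = timedelta(hours=4)  # assumed bin width for "4-hour sampling"


def resample_4h(readings, origin):
    """Average (timestamp, value) readings into consecutive 4-hour bins.

    Hypothetical helper: bins start at `origin`, and bins with no readings
    are omitted. Returns a list of (bin_start, mean_value) sorted by time.
    """
    sums = defaultdict(float)
    counts = defaultdict(int)
    for ts, value in readings:
        idx = (ts - origin) // BIN  # integer index of the 4-hour bin
        sums[idx] += value
        counts[idx] += 1
    return [(origin + idx * BIN, sums[idx] / counts[idx]) for idx in sorted(sums)]


if __name__ == "__main__":
    t0 = datetime(2024, 1, 1)
    # Ten hourly readings whose value equals the hour offset.
    data = [(t0 + timedelta(hours=h), float(h)) for h in range(10)]
    for start, mean in resample_4h(data, t0):
        print(start.isoformat(), mean)
```

Averaging is only one choice. Taking the last value or the median per bin would fit the same structure if the baseline samples differently.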

Have more questions? Drop them in the comments below.

All the best!
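The toolkit's feature list suggests leveraging the hierarchical structure of classes to improve inference efficiency. One possible reading is top-down inference: a child class is only scored when its parent has passed the threshold. This skips whole subtrees and keeps predictions consistent with the hierarchy. The sketch below is minimal and assumes a hypothetical parent map and per-class scoring function. The class names in the example are illustrative, not the challenge's label set.

```python
from typing import Callable, Dict, List, Optional, Set


def top_down_predict(
    parents: Dict[str, Optional[str]],
    score: Callable[[str], float],
    threshold: float = 0.5,
) -> Set[str]:
    """Predict labels top-down through a class hierarchy.

    `parents` maps each class to its parent (None for root classes).
    A class is scored only if its parent was predicted, so subtrees under
    a rejected parent are never evaluated.
    """
    children: Dict[Optional[str], List[str]] = {}
    for cls, parent in parents.items():
        children.setdefault(parent, []).append(cls)

    predicted: Set[str] = set()
    frontier = list(children.get(None, []))  # start from the root classes
    while frontier:
        cls = frontier.pop()
        if score(cls) >= threshold:
            predicted.add(cls)
            # Only descend into classes that were accepted.
            frontier.extend(children.get(cls, []))
    return predicted


if __name__ == "__main__":
    # Illustrative hierarchy and scores (hypothetical).
    parents = {"Point": None, "Sensor": "Point",
               "Temperature_Sensor": "Sensor", "Setpoint": "Point"}
    scores = {"Point": 0.9, "Sensor": 0.8,
              "Temperature_Sensor": 0.7, "Setpoint": 0.2}
    print(sorted(top_down_predict(parents, scores.__getitem__)))
    # ['Point', 'Sensor', 'Temperature_Sensor']
```

The trade-off is that a false negative at a parent hides every descendant. A lower threshold for upper levels is one way to soften that.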

Same predictions of the model at the inference

Over 1 year ago

For the Brick by Brick competition, I am training a multi-label classification model in a tsai notebook. At inference, I consistently get the same predictions regardless of the input. I have tried a variety of preprocessing techniques, but the model still makes the same prediction every time. Has anybody faced this?
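The symptom can be confirmed numerically before trying more preprocessing changes. If a label's predicted probability has almost no spread across different inputs, the model is effectively ignoring the input for that label. Here is a minimal stdlib sketch. It assumes the predicted probabilities have already been collected into a list of per-sample lists. `collapsed_labels` and its `tol` default are hypothetical, not part of tsai.

```python
from statistics import pstdev
from typing import List, Sequence


def collapsed_labels(probs: Sequence[Sequence[float]], tol: float = 1e-3) -> List[int]:
    """Return indices of labels whose predicted probability barely varies.

    probs[i][j] is the predicted probability of label j for sample i.
    A label is flagged when the population standard deviation of its
    probabilities across samples is below `tol`.
    """
    if not probs:
        return []
    n_labels = len(probs[0])
    return [j for j in range(n_labels)
            if pstdev([row[j] for row in probs]) < tol]


if __name__ == "__main__":
    probs = [[0.31, 0.9, 0.02],
             [0.31, 0.1, 0.02],
             [0.31, 0.5, 0.02]]
    print(collapsed_labels(probs))  # [0, 2]
```

If every label is flagged on a varied validation batch, the outputs are constant for all inputs. That points at training or model loading rather than at the preprocessing step.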

Meta Comprehensive RAG Benchmark: KDD Cup 2-9d1937

Client Failed

Almost 2 years ago

You can try it, but I just pinned it to the original version based on russwest404's suggestion.

Almost 2 years ago

Yeah, it's resolved after removing langchain and pinning the vllm version to 0.4.2.

Almost 2 years ago

Thanks, I read this on Reddit as well. I will try without langchain.

Almost 2 years ago

Error in create_agent: Timed out after 601 seconds waiting for clients. 1/4 clients joined.

Any idea why this is happening? Is it related to my code or to an AIcrowd server failure?

Can we have multi GPU submission example?

Almost 2 years ago

Is there a baseline for multi-GPU submission via DDP or Accelerate?

Gitlab Dev Ops issue

Almost 2 years ago

The GitLab issue page does not refresh automatically. We have to reload it every time to see new evaluation log updates on the issue page.

Couldn't connect to gitlab

Almost 2 years ago

Running `git push` fails with:

kex_exchange_identification: Connection closed by remote host

Connection closed by 52.72.8.30 port 22

fatal: Could not read from remote repository.

Please make sure you have the correct access rights and the repository exists.

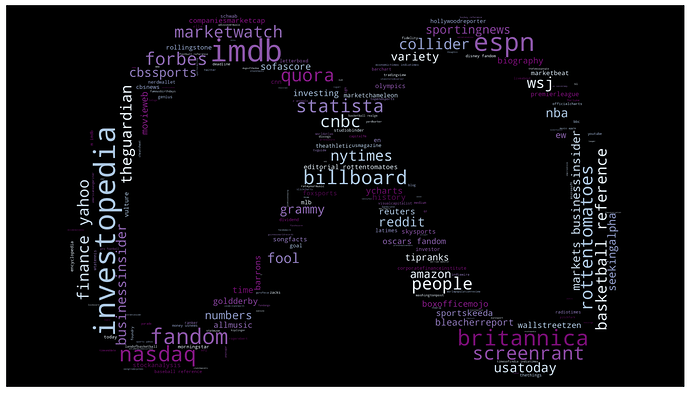

Can we assume the same websites in both the test and training datasets?

About 2 years ago

Can we assume that the same websites appear in both the test and training datasets?

Meta KDD Cup 24 - CRAG - Retrieval Summarization

Are these evaluation qa values present in qa.json correct?

About 2 years ago

The evaluation dataset includes questions about stock prices, but none of the answers are accurate as of February 16th, the last date given in the dataset. I checked the prices for that day on Nasdaq and MarketWatch, and none of them matched.

You can check the closing price at https://www.nasdaq.com/market-activity/stocks/tfx

For example, the closing price on February 16th was $251.07, but the answer is given as $249.07:

`{"interaction_id": 0, "query": "what's the current stock price of Teleflex Incorporated Common Stock in USD?", "answer": "249.07 USD"}`
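The sketch below makes the reported mismatch concrete. It parses the quoted qa.json record and compares its answer with the Nasdaq closing price cited in the post ($251.07 on February 16th). The record and both prices come from the post. The helper name `answer_gap` is hypothetical, and so is the assumption that `answer` is always a number followed by a currency code.

```python
import json

# Record quoted in the post (from qa.json).
RECORD = json.loads(
    '{"interaction_id": 0, '
    '"query": "what\'s the current stock price of Teleflex Incorporated Common Stock in USD?", '
    '"answer": "249.07 USD"}'
)

REPORTED_CLOSE = 251.07  # Nasdaq close on Feb 16th, as reported in the post


def answer_gap(record: dict, reference: float) -> float:
    """Return reference minus the numeric part of the answer (e.g. '249.07 USD')."""
    value = float(record["answer"].split()[0])
    return round(reference - value, 2)


print(answer_gap(RECORD, REPORTED_CLOSE))  # 2.0
```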

Generative Interior Design Challenge 2024

Top teams solutions

About 2 years ago

Nice use of an external dataset and of converting it to this challenge's format. I explored different variations at inference time using prompt engineering. I used a better segmentation model (swin-base-IN21k) and also modified the control items with pillars for better geometry, along with various prompt engineering techniques. Even though the baseline gave me a better score, its results were really inconsistent. In the end, I submitted a realistic vision model through ComfyUI, which gave stable and consistent results. Given the human evaluations, I did expect some randomness in the leaderboard. I would like to express my gratitude to the organizers of this challenge. The challenge is new and exciting, but because there are only 40 images in the test dataset, the human evaluations are much noisier and less consistent. It was really fun exploring Stable Diffusion models and their adapters, and I would like to work on this again when I have more compute.

🏆 Generative Interior Design Challenge: Top 3 Teams

About 2 years ago

@lavanya_nemani You cannot judge performance on the test dataset from 3 public images. Since the scores are really close, even small changes in annotator preference can shift the ranking.

About 2 years ago

Congratulations to the winners! Please also post this on Discord; we had no idea this post existed.

Build fail -- Ephemeral storage issue

About 2 years ago

@lavanya_nemani The maximum submission size is 10 GB, so keep the contents of your submission tag under 10 GB. Check your models folder and delete unused files. The repository itself can hold more than 10 GB, and you don't need to delete those files from it.
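A quick way to follow this advice is to total up the working tree and list the largest files before tagging a submission. Here is a minimal sketch. It assumes the submission corresponds to the files checked out in a local directory, with the `.git` directory excluded. It also treats 10 GB as 10 × 1024³ bytes; the limit's exact definition is an assumption. `largest_files` is a hypothetical helper.

```python
import os
from typing import List, Tuple

LIMIT_BYTES = 10 * 1024**3  # assumed: "10GB" read as 10 GiB


def largest_files(root: str, top: int = 10) -> Tuple[int, List[Tuple[int, str]]]:
    """Walk `root` and return (total_bytes, the `top` largest (size, path) pairs).

    The .git directory is skipped because it is not part of the checked-out
    files, and symlinks are ignored so linked files are not double-counted.
    """
    sizes: List[Tuple[int, str]] = []
    for dirpath, dirnames, filenames in os.walk(root):
        dirnames[:] = [d for d in dirnames if d != ".git"]  # prune .git in place
        for name in filenames:
            path = os.path.join(dirpath, name)
            if os.path.isfile(path) and not os.path.islink(path):
                sizes.append((os.path.getsize(path), path))
    sizes.sort(reverse=True)  # largest first
    return sum(size for size, _ in sizes), sizes[:top]
```

For example, running `total, biggest = largest_files(".")` at the repository root and comparing `total` with `LIMIT_BYTES` shows whether you are over the limit. `biggest` then points at the checkpoints in your models folder that are worth deleting first.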

Submission stuck at intermediate state

About 2 years ago

The evaluation of my submission #251530 completed, but it got stuck and did not proceed to the human evaluation phase.

Notebooks

- Generative Interior Design Submission using Colab: Generative Interior Design Submission notebook using Colab's free version · saidinesh_pola · About 2 years ago
- 🦟Mosquito-Classification: This notebook improves the mosquito classification from the starter notebook · saidinesh_pola · Over 2 years ago

Bangalore, IN


📕 Community Contribution Baseline

Over 1 year ago

Yes, you need it to download the dataset. You can pass it using `!aicrowd login <api key>`. You can also download the dataset manually.